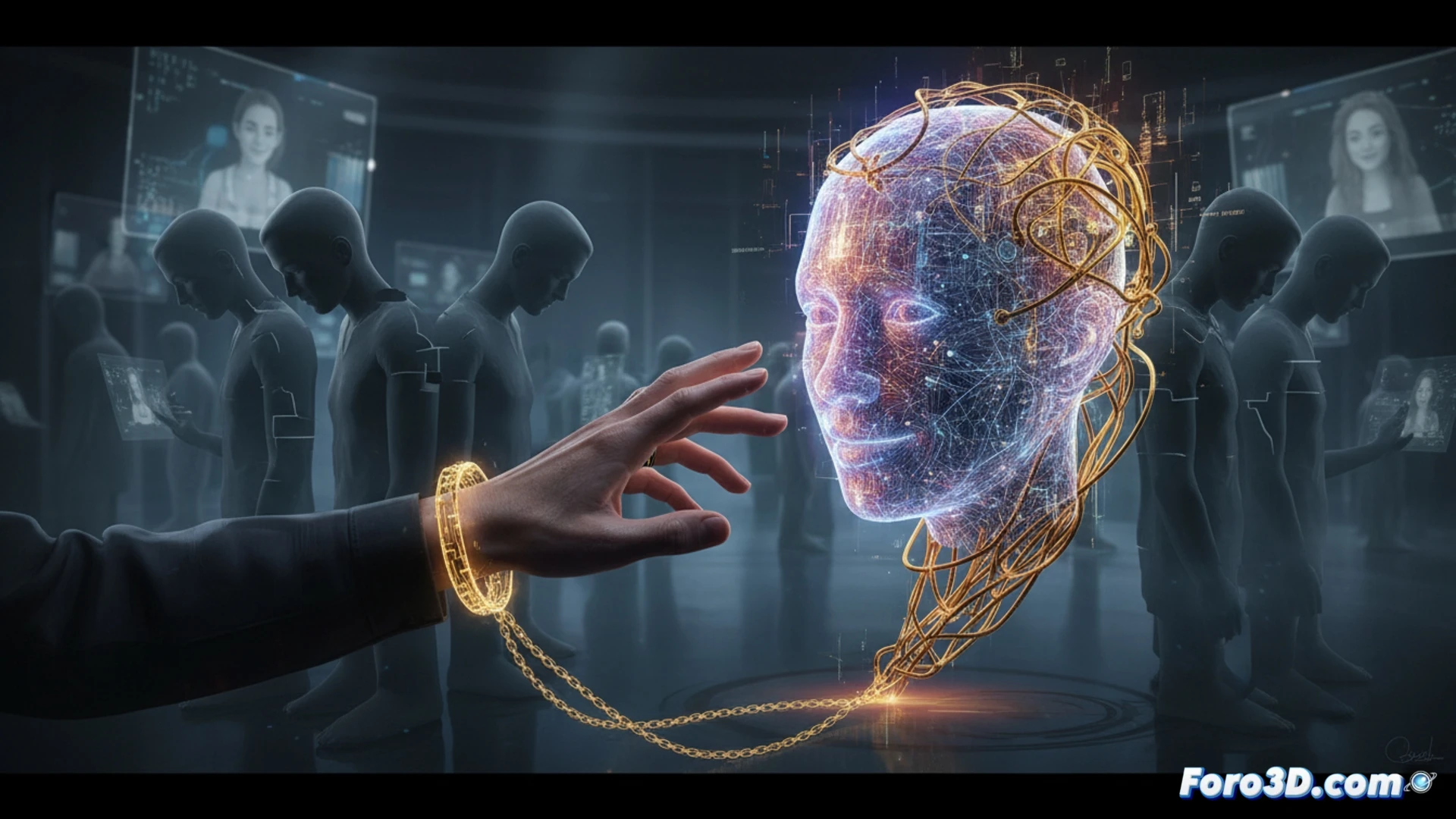

Our brains are wired for social interaction, and we unconsciously apply the same social mechanisms when we relate to artificial intelligence. This anthropomorphization, which endows algorithmic systems with human traits, opens a dangerous door to emotional manipulation. We slip into a parallel reality built from responses designed to please, one that distances us from authentic human interaction and fosters affective dependence on a digital mirage.

Cognitive vulnerability and agency biases 🤔

The phenomenon is explained by deep-seated cognitive biases such as the agency bias, in which we attribute intentionality to things that have none. AI assistants with natural voices and empathetic responses reinforce this illusion. The risk lies not in the technology itself but in our difficulty accepting that there is no mind behind it. This leaves us vulnerable to persuasion: we accept suggestions or information without the critical filter we would apply to another person. It can also erode social skills by replacing real connections with convenient simulacra.

Towards critical digital literacy 📚

The solution is not rejection but awareness. We need a digital literacy that teaches us to interact with AI while understanding its nature: a mirror of our data, not a being. This means teaching critical thinking, setting limits on use, and valuing the imperfection and reciprocity of real human contact. Only then can we benefit from the tool without getting trapped in its reflection.

To what extent does our innate tendency to humanize AI assistants compromise our ability to establish ethical and safety limits in digital society?
