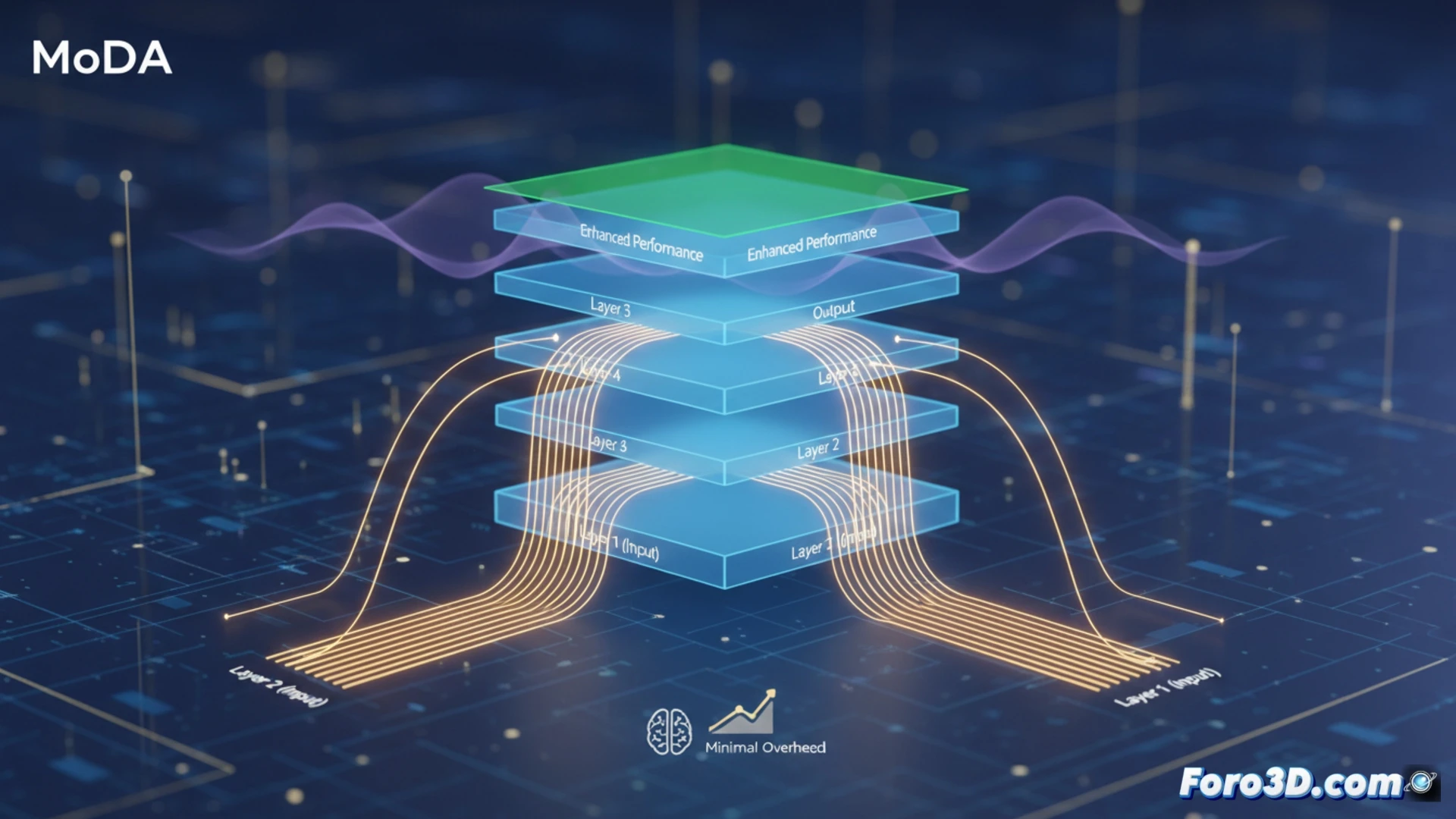

Scaling depth is key to the capability of large language models, but it comes with a problem: signal degradation. As information passes through dozens or hundreds of layers, the useful features formed in early layers get diluted and can be lost by the deeper ones. New research proposes Mixture-of-Depths Attention (MoDA), a mechanism that lets each attention head access not only the current layer but also key-value pairs from previous layers, preserving important signals and improving model performance with minimal computational overhead.

How MoDA Works and its Efficient Implementation 🤖

Technically, MoDA modifies standard attention. In each layer, every attention head can attend to two sets of key-value pairs: those of the sequence at the current layer, and an additional depth set drawn from preceding layers. This lets the model recover and reinforce informative features that would otherwise be diluted. To make this practical, the researchers developed a hardware-efficient algorithm that resolves the non-contiguous memory accesses this design introduces, reaching 97.3% of FlashAttention-2's efficiency at a sequence length of 64K tokens. This makes MoDA viable for large-scale training.
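To make the two key-value sets concrete, here is a minimal single-head NumPy sketch. It is not the researchers' implementation: the function name `moda_style_attention`, the tensor layout, the choice to give each token depth keys and values from its own position in earlier layers, and the single softmax shared across both sets are all assumptions made for illustration. The hardware-efficient kernel mentioned above is not reproduced here.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax; masked entries (-inf) become exactly 0.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def moda_style_attention(q, k_seq, v_seq, k_depth, v_depth):
    """Hypothetical single-head attention over sequence KV plus depth KV.

    q, k_seq, v_seq:  (T, d) arrays for the current layer.
    k_depth, v_depth: (T, L, d) arrays; ASSUMPTION: for each token, keys and
                      values taken from the same position in L earlier layers.
    Returns the output (T, d) and the joint attention weights (T, T + L).
    """
    T, d = q.shape
    scale = 1.0 / np.sqrt(d)

    # Scores against the current layer's sequence, with a causal mask.
    s_seq = (q @ k_seq.T) * scale                           # (T, T)
    causal = np.tril(np.ones((T, T), dtype=bool))
    s_seq = np.where(causal, s_seq, -np.inf)

    # Scores against the depth set of each token.
    s_depth = np.einsum("td,tld->tl", q, k_depth) * scale   # (T, L)

    # ASSUMPTION: one softmax over both sets, so depth entries compete
    # directly with sequence entries for attention mass.
    w = softmax(np.concatenate([s_seq, s_depth], axis=1), axis=1)
    w_seq, w_depth = w[:, :T], w[:, T:]

    out = w_seq @ v_seq + np.einsum("tl,tld->td", w_depth, v_depth)
    return out, w

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    T, L, d = 6, 3, 8
    q, k_seq, v_seq = (rng.normal(size=(T, d)) for _ in range(3))
    k_depth, v_depth = (rng.normal(size=(T, L, d)) for _ in range(2))
    out, w = moda_style_attention(q, k_seq, v_seq, k_depth, v_depth)
    print(out.shape, w.shape)
    print("attention mass on depth KV per token:", w[:, T:].sum(axis=1))
```

In this sketch the depth set adds only L extra columns per query, which gives some intuition for why the extra compute can stay small relative to attention over the full sequence.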

Implications for the Future of Deep Models 🚀

The results are promising: in 1.5B-parameter models, MoDA lowers perplexity and improves downstream task performance with only a 3.7% overhead in FLOPs. Additionally, it works better with post-layer normalization, a common configuration. This suggests that MoDA is not just a patch, but a fundamental architectural primitive for scaling depth more effectively. Its efficiency opens the door to deeper and more capable models without prohibitive computational costs, which could accelerate the development of more powerful and accessible LLMs.
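For readers unfamiliar with the normalization placement mentioned above, the sketch below contrasts post-layer normalization with the pre-layer variant. It is a simplified illustration: `layer_norm` omits the learned gain and bias, and `sublayer` is a hypothetical stand-in for an attention or feed-forward module. It says nothing about how MoDA itself is wired into the block.

```python
import numpy as np

def layer_norm(x, eps=1e-5):
    # Normalize each row to zero mean and unit variance (no learned gain/bias).
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)

def post_norm_block(x, sublayer):
    # Post-LN: add the residual first, then normalize the sum.
    return layer_norm(x + sublayer(x))

def pre_norm_block(x, sublayer):
    # Pre-LN: normalize the sublayer input; the residual path is left untouched.
    return x + sublayer(layer_norm(x))

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.normal(size=(4, 16))
    W = rng.normal(size=(16, 16)) * 0.1
    sublayer = lambda h: h @ W  # hypothetical stand-in for attention or an MLP
    print(post_norm_block(x, sublayer).std(axis=-1))
    print(pre_norm_block(x, sublayer).std(axis=-1))
```

With post-layer normalization every block's output is renormalized, whereas the pre-layer variant lets the residual stream accumulate unnormalized across layers.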

How can MoDA address the signal degradation problem in deep LLMs, and what does this imply for the development of more capable and accessible AI systems?

(P.S.: tech nicknames are like children: you name them, but the community decides what to call them)