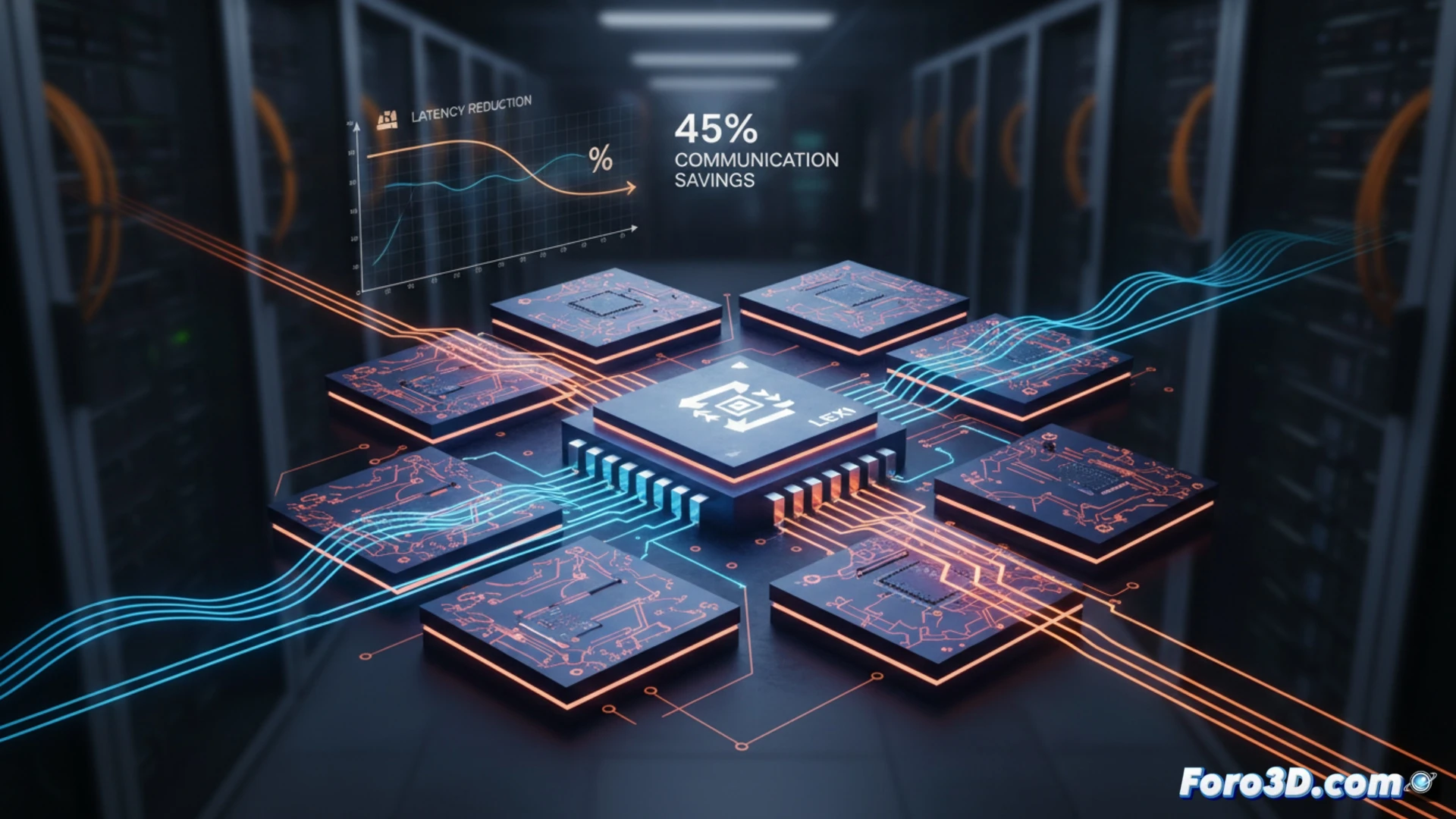

Inference in large language models (LLMs) is hindered by the data-movement bottleneck between chiplets. Because these models predominantly use the BF16 format, an analysis of their traffic shows that the exponent streams have very low entropy (below 3 bits, versus the 8 bits the BF16 exponent field occupies), which makes them highly compressible. We present LEXI, a lossless, Huffman-based exponent compression scheme that operates directly in the network-on-chip (NoC). By compressing activations, caches, and weights, LEXI reduces communication latency by 33-45% and total inference latency by 30-35% in homogeneous chiplet architectures, with minimal area and energy cost.
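To make the entropy claim concrete, here is a minimal Python sketch that extracts the 8-bit exponent field from BF16 values, measures its empirical Shannon entropy, and builds a Huffman code over it. It rests on several assumptions: synthetic Gaussian weights (standard deviation 0.02) stand in for real LLM tensors, BF16 is obtained by truncating float32 rather than rounding, and all helper names are hypothetical. It is an illustration, not LEXI's profiling flow.

```python
import heapq
import math
import random
import struct
from collections import Counter
from typing import Dict


def bf16_exponent(x: float) -> int:
    """8-bit exponent field of x after truncating it to BF16.

    BF16 is the upper 16 bits of an IEEE-754 float32 (1 sign, 8 exponent,
    7 mantissa bits). Truncation is used for simplicity; real converters
    usually round to nearest even.
    """
    bits32 = struct.unpack("<I", struct.pack("<f", x))[0]
    bits16 = bits32 >> 16
    return (bits16 >> 7) & 0xFF


def shannon_entropy(counts: Counter) -> float:
    """Empirical Shannon entropy in bits per symbol."""
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def huffman_code_lengths(counts: Counter) -> Dict[int, int]:
    """Code length (bits) per symbol for a Huffman code built from counts."""
    if len(counts) == 1:
        return {next(iter(counts)): 1}
    # Heap entries: (weight, unique tie-breaker, {symbol: depth in subtree}).
    heap = [(c, i, {s: 0}) for i, (s, c) in enumerate(counts.items())]
    heapq.heapify(heap)
    tie = len(heap)
    while len(heap) > 1:
        w1, _, left = heapq.heappop(heap)
        w2, _, right = heapq.heappop(heap)
        # Merging two subtrees pushes every symbol in them one level deeper.
        merged = {s: d + 1 for s, d in {**left, **right}.items()}
        heapq.heappush(heap, (w1 + w2, tie, merged))
        tie += 1
    return heap[0][2]


# Synthetic stand-in for an LLM weight tensor (assumption: N(0, 0.02)).
random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(100_000)]
exp_counts = Counter(bf16_exponent(w) for w in weights)

H = shannon_entropy(exp_counts)
lengths = huffman_code_lengths(exp_counts)
total = sum(exp_counts.values())
avg_bits = sum(exp_counts[s] * lengths[s] for s in exp_counts) / total

print(f"distinct exponent values: {len(exp_counts)}")
print(f"exponent entropy: {H:.2f} bits, Huffman average: {avg_bits:.2f} bits (raw: 8)")
print(f"bits per BF16 value: 16 -> {8 + avg_bits:.2f} (sign + mantissa + coded exponent)")
```

Because a Huffman code's average length always lies within one bit of the entropy, an exponent stream with entropy below 3 bits codes to fewer than 4 bits per exponent on average. A 16-bit BF16 value (1 sign + 8 exponent + 7 mantissa bits) therefore shrinks to under 12 bits, before any packet framing or codebook overhead.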

LEXI Codec Architecture and Implementation in the NoC 🧠

LEXI is integrated directly into the NoC routers. Small codecs at the router input and output ports compress and decompress the exponents of BF16 data on the fly. The key is an efficient hardware implementation: multi-line lookup table (LUT)-based decoders sustain the maximum link bandwidth, so compression does not introduce transfer delays. Weights are stored compressed in memory and decompressed just before computation in the tensor cores. Implemented in GlobalFoundries (GF) 22 nm technology, the codecs add only 0.09% area and energy overhead, a marginal cost for substantial system-level performance gains.
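The table-driven decoding idea behind LUT-based Huffman decoders can be sketched in Python as follows. This is a single-lane software model under stated assumptions: the code lengths in `CODE_LENGTHS` are made up for illustration, codewords are assigned canonically, and every function name is hypothetical. It does not reproduce LEXI's multi-line hardware organization, which the post does not detail. Each lookup peeks at the next `max_len` bits and returns the decoded exponent plus the number of bits to consume, so one symbol is decoded per table access regardless of its code length.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical code lengths for a few BF16 exponent values; in practice they
# would come from a Huffman code built over profiled exponent statistics.
CODE_LENGTHS: Dict[int, int] = {120: 2, 121: 2, 122: 2, 123: 3, 119: 4, 124: 4}


def canonical_codes(lengths: Dict[int, int]) -> Dict[int, Tuple[int, int]]:
    """Assign canonical Huffman codewords: {symbol: (codeword, length)}."""
    codes: Dict[int, Tuple[int, int]] = {}
    code, prev_len = 0, 0
    for sym, length in sorted(lengths.items(), key=lambda kv: (kv[1], kv[0])):
        code <<= length - prev_len  # append zeros when the length grows
        codes[sym] = (code, length)
        code += 1
        prev_len = length
    return codes


def build_lut(codes: Dict[int, Tuple[int, int]]) -> Tuple[List[Optional[Tuple[int, int]]], int]:
    """LUT indexed by the next max_len bits -> (symbol, code length)."""
    max_len = max(length for _, length in codes.values())
    lut: List[Optional[Tuple[int, int]]] = [None] * (1 << max_len)
    for sym, (code, length) in codes.items():
        base = code << (max_len - length)
        # Every index that starts with this codeword maps to the same symbol.
        for fill in range(1 << (max_len - length)):
            lut[base | fill] = (sym, length)
    return lut, max_len


def encode(exponents: List[int], codes: Dict[int, Tuple[int, int]]) -> Tuple[int, int]:
    """Concatenate codewords MSB-first into an integer bitstream."""
    stream, nbits = 0, 0
    for e in exponents:
        code, length = codes[e]
        stream = (stream << length) | code
        nbits += length
    return stream, nbits


def decode(stream: int, nbits: int, lut, max_len: int) -> List[int]:
    """Decode one exponent per LUT access."""
    out, pos = [], 0
    while pos < nbits:
        remaining = nbits - pos
        if remaining >= max_len:
            window = (stream >> (remaining - max_len)) & ((1 << max_len) - 1)
        else:
            # Zero-pad the tail; the final codeword still fits in `remaining` bits.
            window = (stream & ((1 << remaining) - 1)) << (max_len - remaining)
        sym, length = lut[window]
        out.append(sym)
        pos += length
    return out


codes = canonical_codes(CODE_LENGTHS)
lut, max_len = build_lut(codes)
exps = [121, 121, 120, 123, 119, 122, 124, 121]
stream, nbits = encode(exps, codes)
assert decode(stream, nbits, lut, max_len) == exps
print(f"{len(exps) * 8} raw exponent bits -> {nbits} coded bits")  # 64 raw exponent bits -> 21 coded bits
```

The table has `2**max_len` entries, so bounding the maximum codeword length keeps the LUT small enough for a router port. Replicating the lookup across parallel lanes is one plausible way to keep pace with the link rate, but that is an assumption here, not a description of LEXI's decoder.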

Impact on the Future of 3D Semiconductor Design for AI 🚀

LEXI is more than a simple compression technique; it is a strong example of hardware-software co-design for AI. By targeting the redundancy inherent in numerical formats at the data-link level, it enables more scalable and efficient chiplet architectures and mitigates one of today's biggest limitations: saturated interconnect bandwidth. For the 3D microfabrication niche, LEXI sets a clear precedent: innovation lies not only in stacking more transistors or chiplets, but in intelligently optimizing every bit that travels between them, unlocking new levels of LLM inference performance.

How can the LEXI exponent compression technique optimize data transfer between chiplets to reduce latency in LLM inference?

(P.S.: simulating a 200mm wafer is like making a pizza: everyone wants a slice)