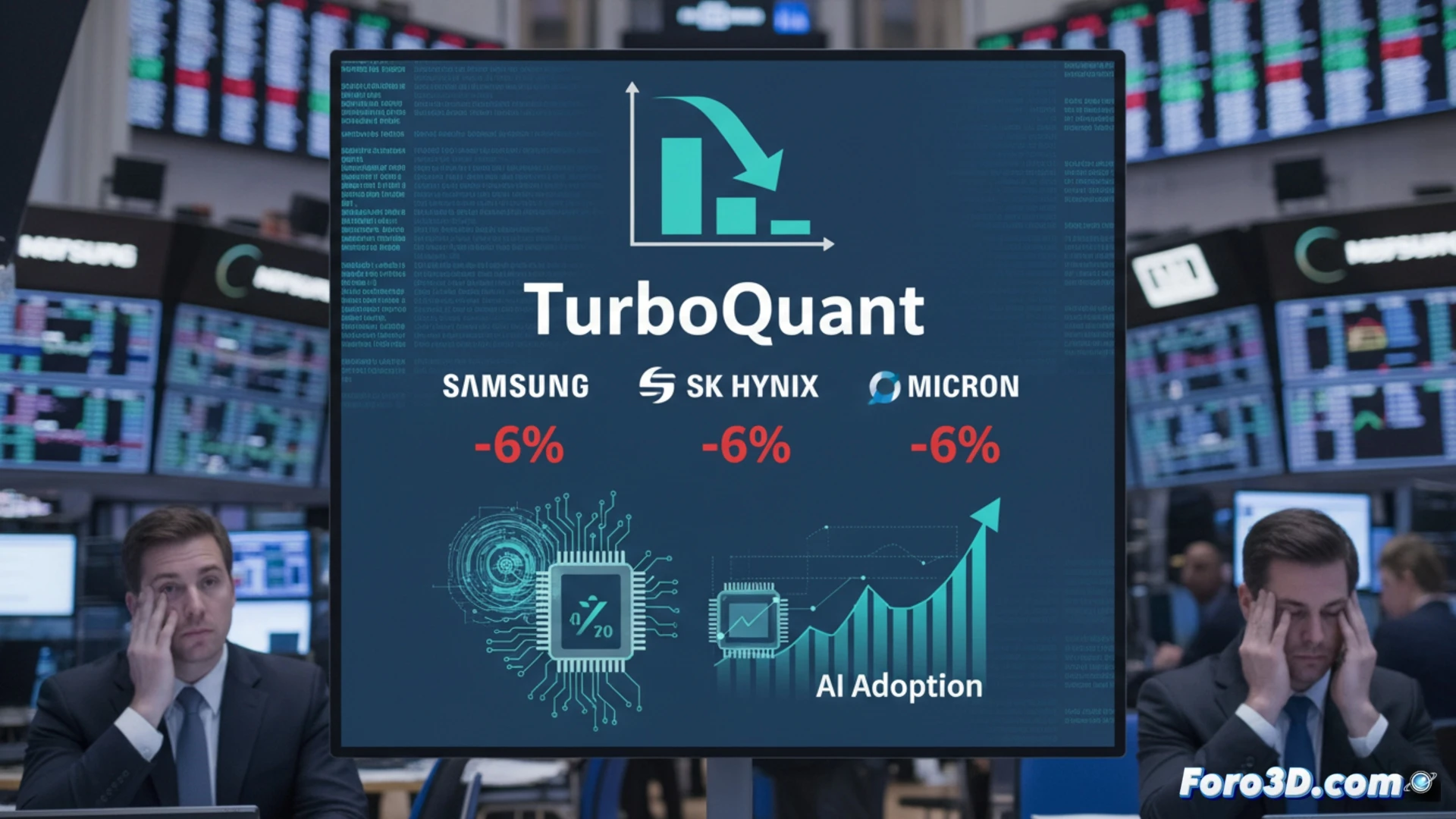

Google has unveiled TurboQuant, a language model compression technique that reduces the memory needed for AI inference by up to six times without loss of accuracy. The announcement triggered an immediate stock drop of close to 6% at memory giants such as Samsung, SK Hynix, and Micron. The market's fear is clear: if software becomes radically more efficient, the projected demand for DRAM and HBM, both critical to AI hardware, could contract significantly.🚀

Technical implications: less HBM per chip, more chips per wafer💡

Take a GPU as the typical AI processor: its architecture relies on stacks of high-bandwidth memory (HBM), an expensive component that is complex to manufacture. TurboQuant compresses the key-value (KV) cache that a model builds up during inference, rather than the weights themselves, so less data has to sit in that HBM during execution. This could translate into future designs with fewer memory stacks per chip or lower-capacity stacks, freeing up package area (HBM sits beside the compute die, not on it) and reducing material costs. At production scale, lower memory demand per unit could mean that the same wafer output supplies more finished chips, altering capacity calculations for foundries and memory manufacturers.
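To put rough numbers on that, here is a minimal Python sketch of the stack arithmetic. Everything in it is an assumption for illustration: the 144 GB baseline, the 24 GB stack capacity, and the helper names `compressed_footprint_gb` and `hbm_stacks_needed` are hypothetical, and it assumes the whole HBM footprint shrinks by the "up to six times" best case, which real workloads may not reach.

```python
import math


def compressed_footprint_gb(footprint_gb: float, compression_ratio: float) -> float:
    """Footprint after compression (a ratio of 6.0 means six times smaller)."""
    if compression_ratio <= 0:
        raise ValueError("compression_ratio must be positive")
    return footprint_gb / compression_ratio


def hbm_stacks_needed(footprint_gb: float, stack_capacity_gb: float) -> int:
    """Smallest whole number of HBM stacks that can hold the footprint."""
    if stack_capacity_gb <= 0:
        raise ValueError("stack_capacity_gb must be positive")
    return math.ceil(footprint_gb / stack_capacity_gb)


# Hypothetical figures, chosen only to make the arithmetic easy to follow.
BASELINE_GB = 144.0       # assumed data held in HBM before compression
STACK_CAPACITY_GB = 24.0  # assumed capacity of a single HBM stack
RATIO = 6.0               # best case quoted in the announcement

before = hbm_stacks_needed(BASELINE_GB, STACK_CAPACITY_GB)
after = hbm_stacks_needed(
    compressed_footprint_gb(BASELINE_GB, RATIO), STACK_CAPACITY_GB
)
print(f"stacks before: {before}, after: {after}")
```

With these made-up figures the count drops from six stacks to one. If only part of the footprint is compressible, the saving shrinks accordingly, so treat this as an upper bound rather than a forecast.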

Short-term panic versus structural opportunity🤔

The stock reaction reflects fear of disruption, but the long-term outlook is more nuanced. Cheaper, more efficient AI inference lowers the barrier to entry, driving mass adoption across devices and services. That proliferation could end up generating greater total memory demand, though spread across more applications and possibly different chip types. The semiconductor industry will have to adapt: value will no longer lie solely in selling gigabytes, but also in integrating memory and processing solutions optimized for compressed, efficient models.

How could model compression like Google's TurboQuant drive the adoption of high-density memories and 3D-IC architectures in AI hardware?

(P.S.: modeling a chip in 3D is easy, the hard part is making it not look like a Lego city)