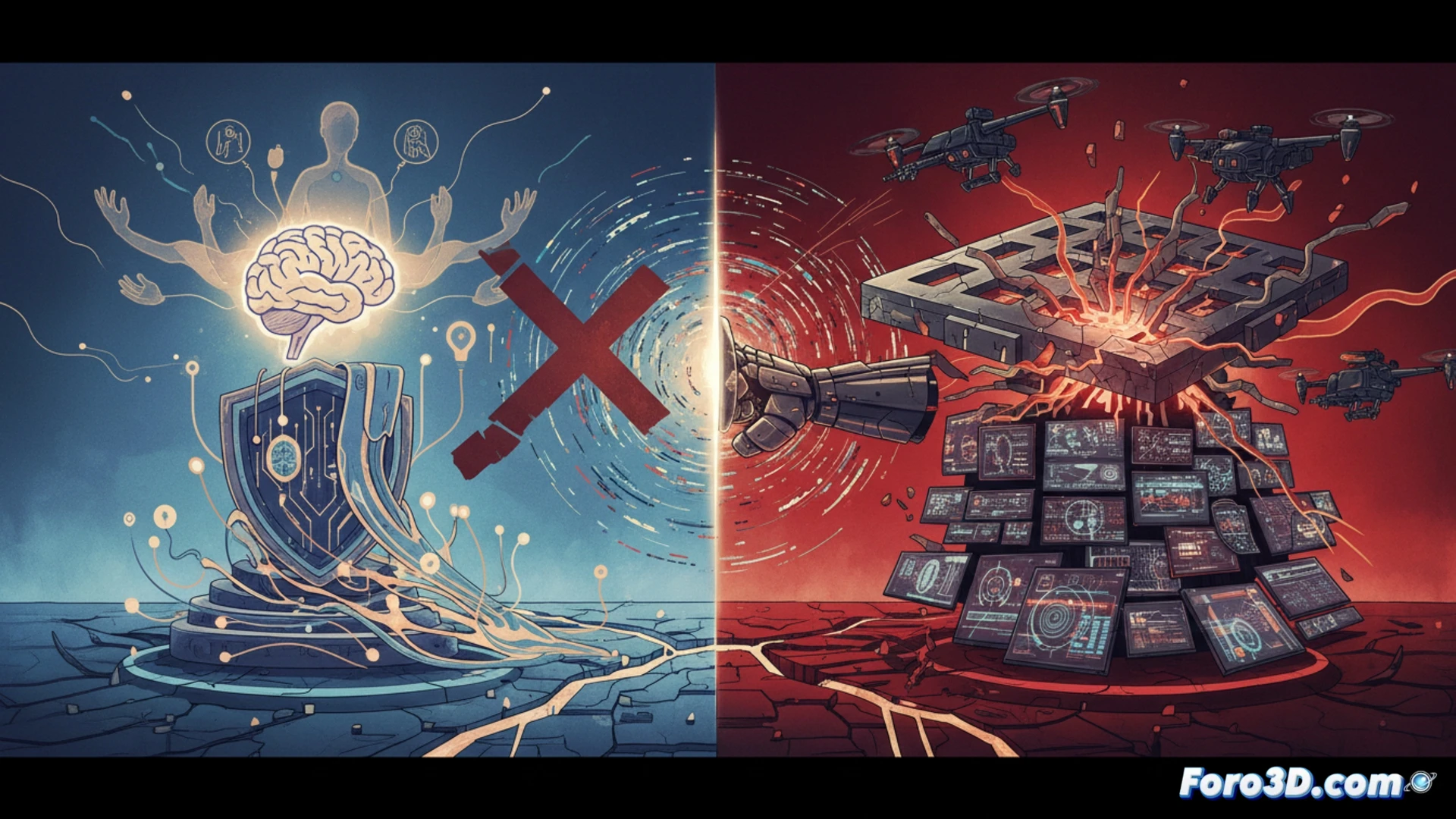

In an unprecedented move, the U.S. Department of Defense has designated the artificial intelligence company Anthropic as a risk to the national security supply chain. This measure, typically reserved for foreign adversaries, prohibits the company and its partners from working with the government. The conflict stems from an irreconcilable ethical clash: Anthropic refused to allow the use of its AI for mass surveillance or autonomous weapons, defying Pentagon demands and jeopardizing its own economic viability.

Designation as a supply chain risk: legal and operational implications ⚖️

The official designation as a national security supply chain risk is no minor sanction. It is a powerful legal instrument that effectively excludes Anthropic from any contracts with the Department of Defense and other key agencies. This goes beyond a simple bid rejection; it is a broad prohibition that extends to its business partners, cutting the company off from an enormous market. Anthropic's decision to challenge the measure in court could set a crucial precedent. The court case is expected to define the limits of governmental power to punish companies that, for self-declared ethical reasons, refuse to collaborate on national security projects, and to test corporate autonomy in the development of dual-use technologies.

A dangerous precedent for the AI industry ⚠️

This case transcends Anthropic and sets an alarming precedent for the entire technology industry. It signals that prioritizing rigid ethical principles, especially in sensitive areas like lethal autonomy or surveillance, can entail an existential economic cost. The Pentagon's measure could force other startups to choose between their moral compass and their commercial survival, hindering responsible innovation and pushing the development of critical AI toward less scrupulous actors or rival powers. The resulting legal battle will define the future of ethical AI governance and the fragile balance between state security and corporate freedom of conscience.

To what extent should national security be prioritized over ethics in the development of advanced artificial intelligence?

(P.S.: trying to ban a nickname on the internet is like trying to cover the sun with one finger... only digital.)