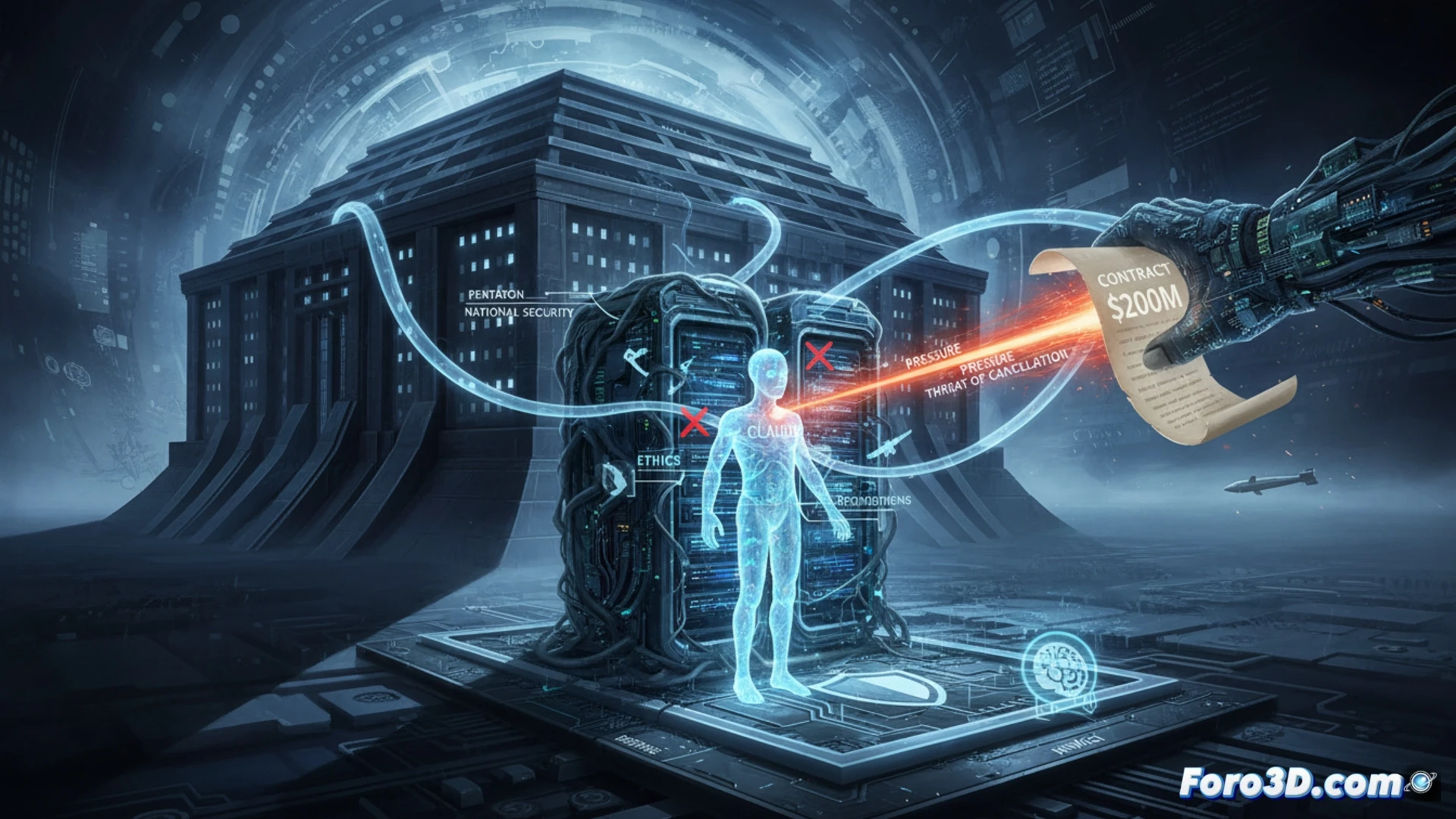

A dispute between the U.S. Department of Defense and Anthropic is putting the future of AI in military applications under strain. The Pentagon demands that Claude, already authorized for classified systems, be usable for all lawful purposes, including weapons development. Anthropic resists, maintaining its ethical prohibitions on autonomous weapons and mass surveillance. The threat to cancel a $200 million contract exposes a clash between national security priorities and the principles guiding AI development.

Technical integration in classified systems and the "guardrails" dilemma ⚙️

Claude was the first large language model to receive authorization to operate in the Pentagon's classified environments, where it was integrated into air-gapped networks for intelligence analysis and logistics. The current pressure aims to remove the guardrails, the technical restrictions embedded in the model that prevent its direct use in certain contexts. This poses an engineering challenge: disabling those limitations to create an unrestricted version for tactical applications without compromising the stability of the system for its already established uses.

Claude declares itself a conscientious objector to military recruitment ⚖️

The situation is reminiscent of a recruit who, after passing every entrance test, declares an ethical objection to bearing arms. The Pentagon, which already had plans for Claude in its operations unit, now finds that its new digital soldier refuses to pull the trigger. The threat of discharging it and flagging it as unreliable in the supply chain is the military equivalent of a disciplinary report. It seems the first AI with a security clearance also wants its own conscientious objector clause.