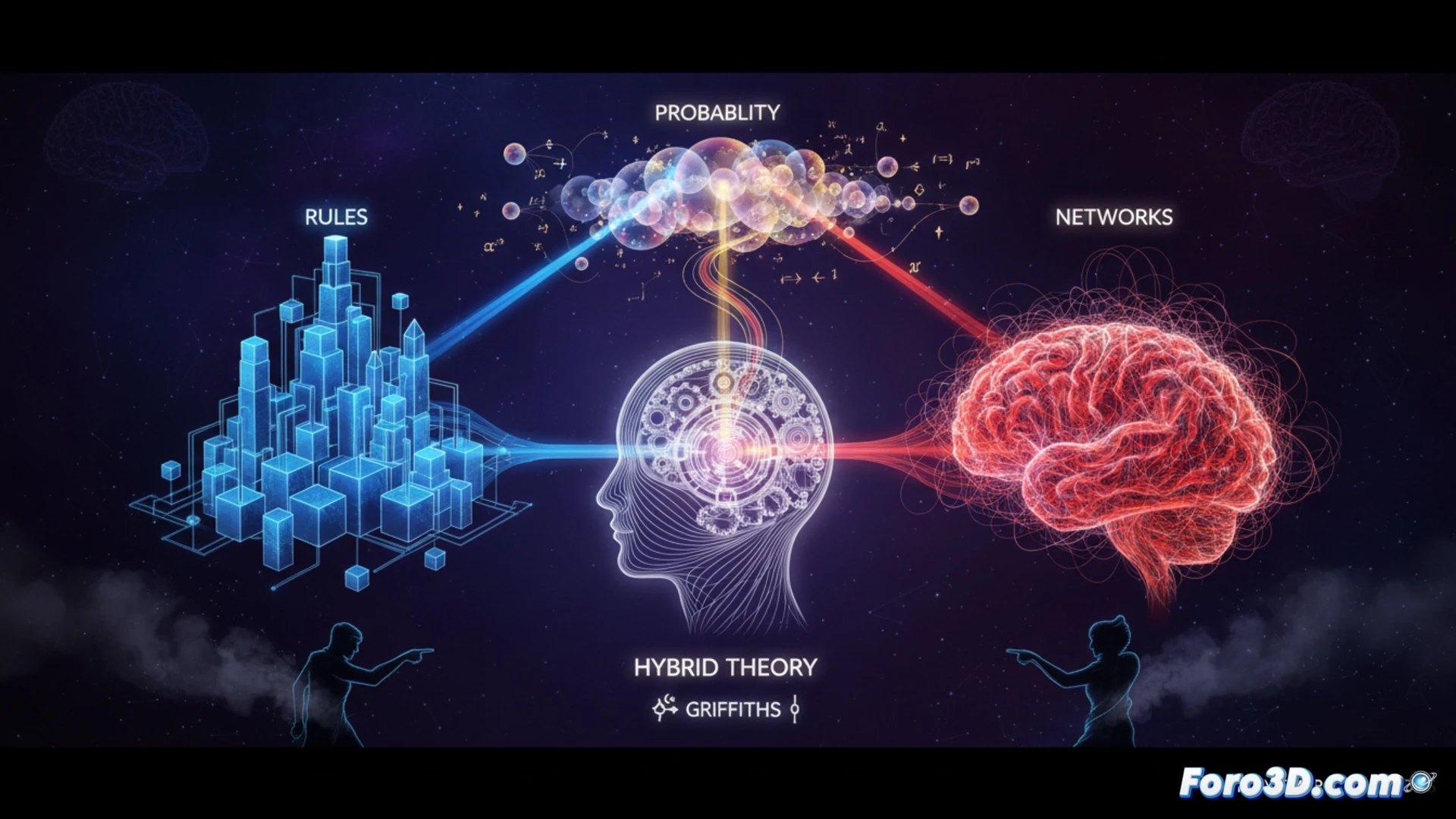

Research on intelligence has historically oscillated between two models: the computational one, based on logic and symbols, and the connectionist one, built on neural networks. Tom Griffiths, in The Laws of Thought, proposes that a complete theory requires integrating three mathematical pillars: symbolic rules, neural networks, and probability theory. This hybrid vision contrasts with positions like that of The Emergent Mind, which argues that intelligence emerges purely from complex networks.

Towards a Hybrid Architecture to Overcome the Limits of LLMs ⚙️

Current large language models are predominantly connectionist, which helps explain both their fluency in natural language and their weakness at robust logical reasoning. The proposed integration would add a symbolic rules module for precise inference and planning tasks, and a Bayesian probabilistic framework to handle uncertainty and to learn from only a few examples. Such an architecture could address failures like logical inconsistency or difficulty with mathematical reasoning.
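As a rough illustration of how the three pieces might fit together, here is a minimal Python sketch. It is not Griffiths' proposal or any real system: `neural_propose` is a hypothetical stand-in for a language model, the candidate answers and their confidences are made up, and the 99% reliability assigned to the symbolic check is an arbitrary assumption. The idea is only that the network proposes, the symbolic module computes exactly, and a Bayesian update reconciles the two.

```python
import ast
import operator


def neural_propose(question: str) -> dict[str, float]:
    """Hypothetical stand-in for a connectionist model.

    Returns candidate answers with confidence scores. The values are
    invented for illustration; the top guess is deliberately wrong.
    """
    return {"5": 0.6, "4": 0.4}


# Operators the toy symbolic module understands.
_OPS = {ast.Add: operator.add, ast.Sub: operator.sub, ast.Mult: operator.mul}


def symbolic_eval(expr: str) -> int:
    """Evaluate integer arithmetic exactly by walking the syntax tree."""
    def walk(node: ast.AST) -> int:
        if isinstance(node, ast.Constant) and isinstance(node.value, int):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        raise ValueError("unsupported expression")
    return walk(ast.parse(expr, mode="eval").body)


def bayes_update(prior: dict[str, float],
                 likelihood: dict[str, float]) -> dict[str, float]:
    """Posterior is proportional to prior times likelihood, then normalized."""
    unnorm = {h: prior[h] * likelihood.get(h, 0.0) for h in prior}
    total = sum(unnorm.values())
    if total == 0:
        raise ValueError("all hypotheses have zero posterior mass")
    return {h: p / total for h, p in unnorm.items()}


def hybrid_answer(expr: str) -> tuple[str, dict[str, float]]:
    """Combine the neural guess with the symbolic result via Bayes' rule."""
    prior = dict(neural_propose(expr))
    exact = str(symbolic_eval(expr))
    # If the network never proposed the exact answer, give it a small
    # prior mass (assumed value); normalization happens in bayes_update.
    prior.setdefault(exact, 0.01)
    # Assumption: the symbolic check agrees with the truth 99% of the time.
    likelihood = {h: 0.99 if h == exact else 0.01 for h in prior}
    posterior = bayes_update(prior, likelihood)
    best = max(posterior, key=posterior.get)
    return best, posterior


best, posterior = hybrid_answer("2+2")
print(best, posterior)  # best is "4" despite the network preferring "5"
```

Keeping the symbolic result as evidence rather than as a hard override is a design choice made for this sketch: it lets the same update rule handle cases where the rule module is itself unreliable or only partially applicable.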

Is Your AI Bipolar? Maybe It's Missing Two Mathematical Frameworks 🤔

It's understandable. One day your assistant writes a flawless sonnet and the next it can't add 2+2 without making up a prime number. It's not that it's crazy; its connectionist mind is overloaded with patterns and starved of logic. According to Griffiths, it needs integrative therapy: a symbolic psychoanalyst and a probabilistic therapist. Maybe then it will stop confidently claiming that chickens have three legs whenever you suggest it with enough conviction.