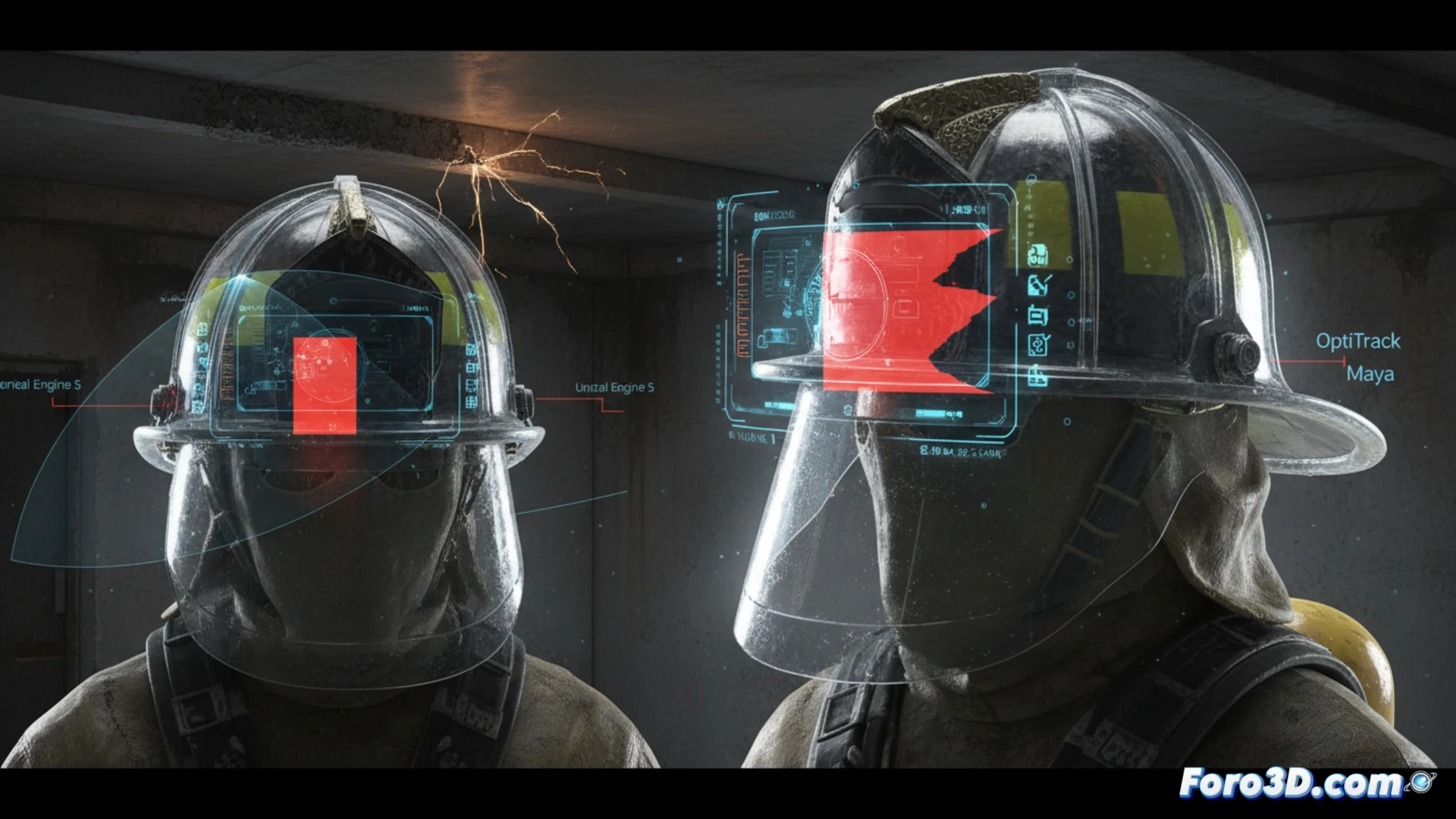

A recent accident during a rescue drill has put the safety of augmented reality (AR) interfaces in hostile environments under scrutiny. A firefighter wearing a helmet with an AR display failed to detect an obstacle that his own HUD was hiding in his peripheral vision. A recreation of the incident in Unreal Engine 5, driven by OptiTrack motion capture data, revealed that a critical status panel covered exactly the area where the danger appeared.

3D Recreation of the Failure: Unreal Engine 5 and OptiTrack as Forensic Tools 🛠️

To show the scale of the problem, we modeled the scene in Maya and imported it into Unreal Engine 5. We used OptiTrack data to accurately replicate the position of the firefighter's head and helmet during the incident. The result was chilling: the temperature and pressure overlay, designed to sit statically in the upper right corner, covered 35% of the visual field when the firefighter turned his head to inspect a beam. The simulation showed that, in the split second before the impact, the AR interface acted like a digital blind, removing the visual information he needed to judge the distance to the obstacle.
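The 35% figure comes from the reconstruction itself. As a rough illustration of how such a share, and the question of whether a hazard sits behind a panel, might be computed from tracked head pose, here is a minimal Python sketch. It assumes that both the field of view and the HUD panel can be approximated as axis-aligned rectangles in head-relative yaw/pitch angles (area measured in angle space, not true solid angle), and it subtracts yaw and pitch independently, which is only accurate near the center of view. `AngularRect`, `occluded_fraction` and `head_relative_angles` are hypothetical names, not part of Unreal Engine or any OptiTrack SDK, and the example numbers are illustrative, not taken from the incident.

```python
import math
from dataclasses import dataclass


@dataclass
class AngularRect:
    """Axis-aligned region in head-relative angles, in degrees.

    Hypothetical representation: yaw is positive to the right,
    pitch is positive upward, (0, 0) is straight ahead.
    """
    yaw_min: float
    yaw_max: float
    pitch_min: float
    pitch_max: float

    def area(self) -> float:
        # Area in angle space (deg^2); an empty intersection yields 0.
        return (max(0.0, self.yaw_max - self.yaw_min)
                * max(0.0, self.pitch_max - self.pitch_min))

    def intersect(self, other: "AngularRect") -> "AngularRect":
        return AngularRect(
            max(self.yaw_min, other.yaw_min),
            min(self.yaw_max, other.yaw_max),
            max(self.pitch_min, other.pitch_min),
            min(self.pitch_max, other.pitch_max),
        )

    def contains(self, yaw: float, pitch: float) -> bool:
        return (self.yaw_min <= yaw <= self.yaw_max
                and self.pitch_min <= pitch <= self.pitch_max)


def occluded_fraction(fov: AngularRect, panel: AngularRect) -> float:
    """Share of the field of view covered by the panel (0.0 to 1.0)."""
    return fov.intersect(panel).area() / fov.area()


def head_relative_angles(head_pos, head_yaw_deg, head_pitch_deg, target_pos):
    """Yaw/pitch of a world-space target relative to the head direction.

    Assumed world axes: x right, y up, z forward. Subtracting yaw and
    pitch separately is a simplification, accurate near the view center.
    """
    dx, dy, dz = (t - h for t, h in zip(target_pos, head_pos))
    world_yaw = math.degrees(math.atan2(dx, dz))
    world_pitch = math.degrees(math.atan2(dy, math.hypot(dx, dz)))
    # Wrap the yaw difference into [-180, 180).
    rel_yaw = (world_yaw - head_yaw_deg + 180.0) % 360.0 - 180.0
    return rel_yaw, world_pitch - head_pitch_deg


# Hypothetical example: a 90 x 60 degree view and an upper-right panel.
fov = AngularRect(-45.0, 45.0, -30.0, 30.0)
panel = AngularRect(15.0, 45.0, 5.0, 30.0)
print(f"occluded: {occluded_fraction(fov, panel):.1%}")

yaw, pitch = head_relative_angles((0.0, 0.0, 0.0), 0.0, 0.0, (1.0, 1.0, 2.0))
print("hazard hidden by panel:", panel.contains(yaw, pitch))
```

Fed with the recorded head pose for each frame, a check like this would flag the exact moments when a panel and a hazard overlap, rather than relying on visual inspection of the replay.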

Towards Adaptive Design: Transparency and Dynamic Relocation 💡

The solution is not to eliminate information but to manage its spatial hierarchy. I propose three practical improvements: adaptive transparency, which raises a panel's opacity only while the user's gaze rests on it; dynamic relocation, which shifts panels to the outer edges of the viewport when rapid head movement is detected; and an occlusion alert system, which projects a semi-transparent outline of the real obstacle over the HUD. These changes can be verified by replaying the OptiTrack data through the same Maya and Unreal Engine 5 reconstruction, and they could turn a dangerous HUD into an assistant that never blocks the wearer's view of danger.
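The three proposals can be expressed as simple per-frame rules. The Python below is a minimal sketch under stated assumptions, not a tested HUD implementation: panels and hazards are given in head-relative yaw/pitch degrees, head speed is estimated from two successive pose samples (for example, consecutive motion capture frames), and every threshold (`BASE_OPACITY`, `DWELL_TO_FULL_S`, `FAST_HEAD_DEG_PER_S`) and function name is a hypothetical placeholder that would need tuning and validation in the reconstruction.

```python
import math
from dataclasses import dataclass

# Hypothetical placeholder thresholds, not validated values.
BASE_OPACITY = 0.2           # panel opacity while not being looked at
FULL_OPACITY = 1.0           # opacity once the gaze has dwelt on the panel
DWELL_TO_FULL_S = 0.3        # seconds of gaze dwell to reach full opacity
FAST_HEAD_DEG_PER_S = 120.0  # head speed that triggers relocation


@dataclass
class Panel:
    # Head-relative angular bounds in degrees (yaw right +, pitch up +).
    yaw_min: float
    yaw_max: float
    pitch_min: float
    pitch_max: float

    def contains(self, yaw: float, pitch: float) -> bool:
        return (self.yaw_min <= yaw <= self.yaw_max
                and self.pitch_min <= pitch <= self.pitch_max)


def adaptive_opacity(gaze_dwell_s: float) -> float:
    """1) Adaptive transparency: opacity ramps up linearly, and only
    while the gaze rests on the panel; otherwise it stays faint."""
    t = min(max(gaze_dwell_s / DWELL_TO_FULL_S, 0.0), 1.0)
    return BASE_OPACITY + t * (FULL_OPACITY - BASE_OPACITY)


def head_speed_deg_per_s(prev, curr, dt_s: float) -> float:
    """Angular speed between two (yaw, pitch) samples taken dt_s apart."""
    dyaw = (curr[0] - prev[0] + 180.0) % 360.0 - 180.0  # wrap at +/-180
    dpitch = curr[1] - prev[1]
    return math.hypot(dyaw, dpitch) / dt_s


def relocate(panel: Panel, speed: float, fov_half_yaw: float) -> Panel:
    """2) Dynamic relocation: during fast head turns, push the panel to the
    horizontal edge of the viewport on the side it already occupies."""
    if speed < FAST_HEAD_DEG_PER_S:
        return panel
    width = panel.yaw_max - panel.yaw_min
    if panel.yaw_min + panel.yaw_max >= 0.0:  # panel centered on the right
        return Panel(fov_half_yaw - width, fov_half_yaw,
                     panel.pitch_min, panel.pitch_max)
    return Panel(-fov_half_yaw, -fov_half_yaw + width,
                 panel.pitch_min, panel.pitch_max)


def occlusion_alert(panel: Panel, hazards):
    """3) Occlusion alert: return the (name, yaw, pitch) hazards whose
    direction falls behind the panel, so their outline can be drawn on top."""
    return [h for h in hazards if panel.contains(h[1], h[2])]


# Hypothetical frame: a fast head turn with a beam hidden behind the panel.
status = Panel(15.0, 40.0, 5.0, 30.0)
speed = head_speed_deg_per_s((0.0, 0.0), (30.0, 0.0), 0.1)
status = relocate(status, speed, fov_half_yaw=45.0)
print("opacity:", adaptive_opacity(gaze_dwell_s=0.0))
print("outline:", occlusion_alert(status, [("beam", 26.6, 24.1)]))
```

Keeping each rule as a separate pure function makes it easy to replay recorded head-pose frames through them one at a time and compare the resulting layout with what the firefighter actually saw.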

Considering the accident in the rescue drill, where the HUD itself hid the hazard from the firefighter's view, how should design protocols be rethought to ensure that critical information never obscures real environmental hazards?

(PS: AR applied to maintenance lets you see where the fault is... before the machine explodes.)