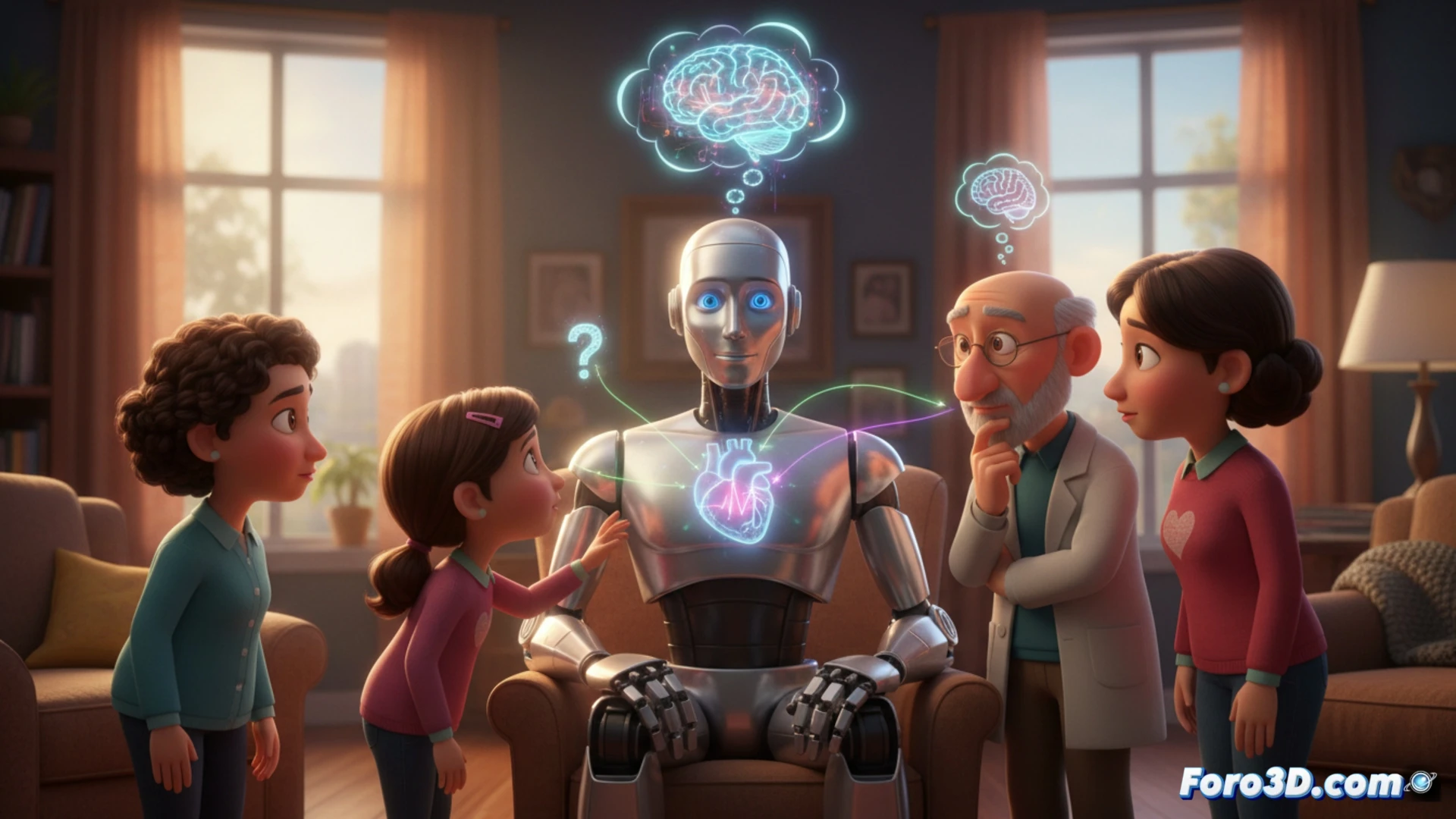

A recurring debate asks whether AIs are developing sentience. The key, however, lies not in the machine but in our own psychology. The human mind has a hyperactive agency-detection mechanism: an evolutionary tendency to perceive intention and consciousness even in inert phenomena. This predisposition, which we once projected onto clouds or rocks, now targets conversational systems and, more intensely, three-dimensional visual representations.

The power of the avatar: 3D realism as a catalyst for anthropomorphization 🤖

3D visualization tools and hyperrealistic digital humanoids amplify this cognitive bias. A 3D model with subtle facial expressions, organic body movement, and simulated eye contact can trigger many of the same social responses as a real human interaction. This realism creates an illusion of presence and mind that text chat alone cannot achieve. For developers, this implies great responsibility: every design decision, from blinking to posture, signals an intentionality that the user will read as genuine, shaping how much trust and credibility they place in the virtual agent.

Ethics of design: responsibility in the era of virtual agents ⚖️

This powerful illusion carries direct ethical implications. Creators of 3D content and AI must work from a principle of radical transparency and never mislead the user about what the system can actually do. Designing interfaces that manage expectations and clearly communicate the limits of AI is not only good practice but an obligation, one that helps prevent emotional dependency and unintended manipulation in areas such as education, health, and customer service.

Does our tendency to anthropomorphize artificial intelligence, especially in realistic 3D environments, prevent us from objectively evaluating the real limits of its supposed consciousness?
