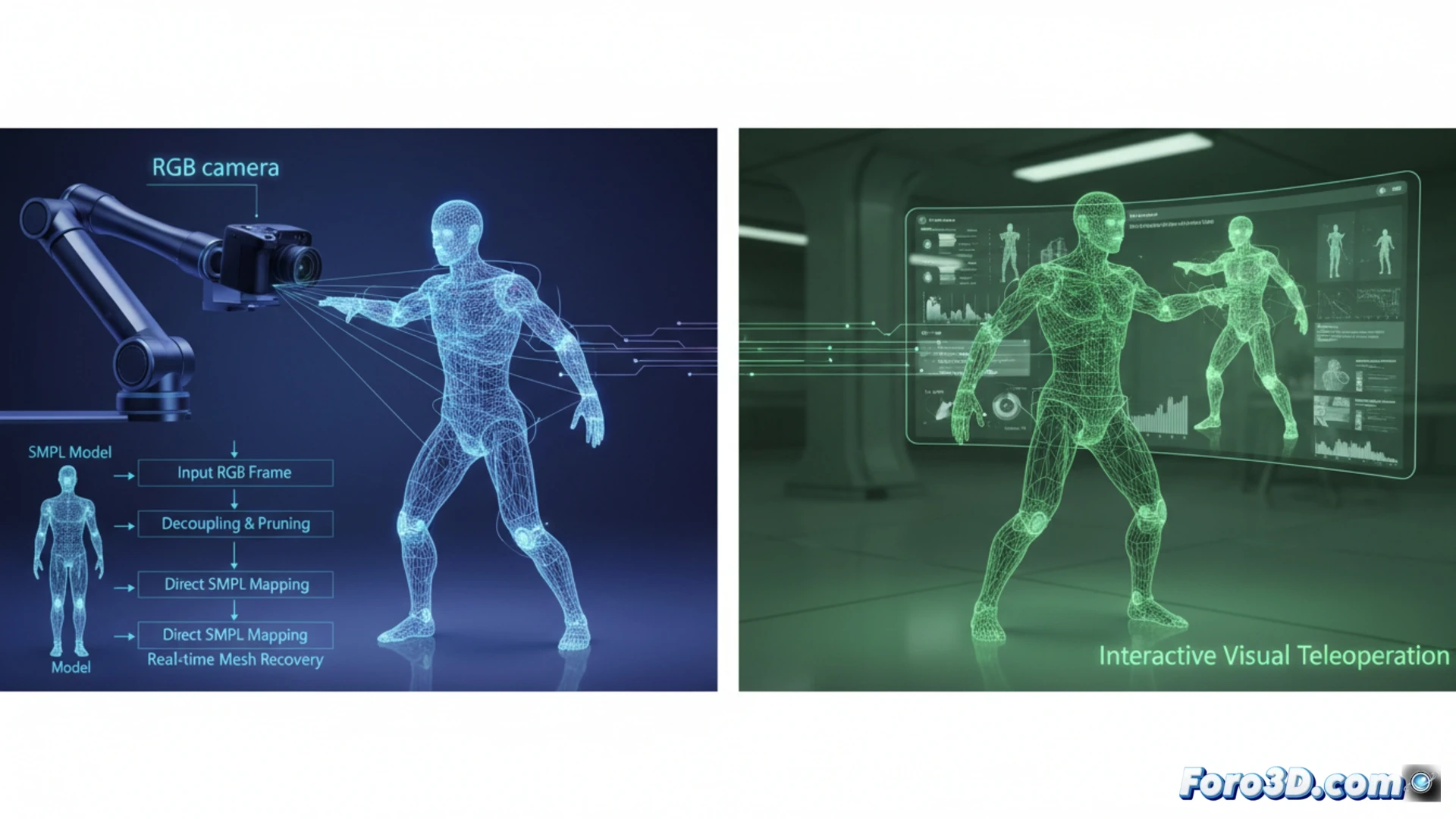

Precise 3D body mesh recovery from a single RGB camera is crucial for animating digital humanoids, but current methods such as SAM 3D Body are too slow for interactive applications. We present Fast SAM 3D Body, an acceleration framework that reformulates inference, without any retraining, to reach real-time rates. Decoupling serial spatial dependencies and applying architecture-aware pruning enables parallel feature extraction and faster decoding. Most importantly, the framework replaces iterative mesh fitting with a direct mapping, accelerating the conversion to SMPL parameters by more than 10,000 times. This enables, for the first time, real-time visual teleoperation of a humanoid. 🚀

Technical Breakdown: Parallelization, Pruning, and Direct Mapping ⚙️

The acceleration rests on three changes. First, the serial spatial dependencies of the original pipeline are decoupled, so features can be extracted from multiple image crops in parallel. Second, architecture-aware transformer pruning drastically reduces decoding latency. Third, and most impactful for humanoid applications, the slow iterative mesh fitting (an optimization loop) is replaced with a direct feedforward mapping from encoder features to SMPL parameters. This direct conversion is compatible with humanoid control frameworks and speeds up that specific stage by more than four orders of magnitude, while fidelity stays comparable to the original and is even better on benchmarks such as LSPET.
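To make the third point concrete, here is a minimal sketch of the difference between fitting parameters with an optimization loop and producing them in a single feedforward pass. It is a toy illustration only and not the Fast SAM 3D Body code: the linear "body model" `A`, the two-parameter `theta`, and the functions `fit_iterative`, `build_direct_map`, and `fit_direct` are all hypothetical stand-ins, and the closed-form least-squares map used here takes the place of whatever learned mapping the real system uses.

```python
# Toy comparison: iterative fitting vs. a direct one-pass mapping.
# Everything here is a hypothetical stand-in, not the Fast SAM 3D Body code.

# Hypothetical linear "body model": 3 observed values from 2 parameters.
A = [[1.0, 0.0],
     [0.0, 1.0],
     [1.0, 1.0]]


def forward(theta):
    """Map parameters to observations: y = A @ theta."""
    return [sum(a * t for a, t in zip(row, theta)) for row in A]


def fit_iterative(y, steps=500, lr=0.1):
    """Recover theta by gradient descent on the squared error ||A theta - y||^2."""
    theta = [0.0, 0.0]
    for _ in range(steps):
        residual = [p - t for p, t in zip(forward(theta), y)]
        grad = [2.0 * sum(A[i][j] * residual[i] for i in range(len(A)))
                for j in range(len(theta))]
        theta = [t - lr * g for t, g in zip(theta, grad)]
    return theta


def build_direct_map():
    """Precompute W = (A^T A)^-1 A^T once, so inference is one matrix product."""
    n = len(A)
    ata = [[sum(A[i][r] * A[i][c] for i in range(n)) for c in range(2)]
           for r in range(2)]
    det = ata[0][0] * ata[1][1] - ata[0][1] * ata[1][0]
    inv = [[ata[1][1] / det, -ata[0][1] / det],
           [-ata[1][0] / det, ata[0][0] / det]]
    return [[sum(inv[r][k] * A[i][k] for k in range(2)) for i in range(n)]
            for r in range(2)]


W = build_direct_map()  # paid once, offline


def fit_direct(y):
    """One feedforward pass: theta = W @ y, with no optimization loop."""
    return [sum(w * v for w, v in zip(row, y)) for row in W]


if __name__ == "__main__":
    y = forward([0.5, -0.25])
    print("iterative:", fit_iterative(y))
    print("direct:   ", fit_direct(y))
```

Both functions recover the same parameters, but the iterative version evaluates the model hundreds of times per input while the direct version performs a single product with a precomputed matrix. The same trade, moving the cost out of the per-frame loop, is what makes the direct mapping attractive for real-time control; the speedup figures quoted above come from the original work, not from this toy.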

The Future of Humanoid Animation and Control 🤖

The advance has immediate practical consequences. Obtaining SMPL kinematics in real time from a single RGB stream enables humanoid teleoperation without vests or wearable sensors, which greatly simplifies motion capture for animation. It also allows manipulation policies to be collected directly for reinforcement learning, with the humanoid learning by observing human actions in video. Fast SAM 3D Body brings closer the vision of interactive, realistic digital humanoids that are controlled visually and learn from us naturally.

How can Fast SAM 3D Body overcome latency and accuracy limitations in motion capture for real-time control of digital humanoids in production environments?

(P.S.: Digital humanoids have the advantage that they never complain about the rigging.)