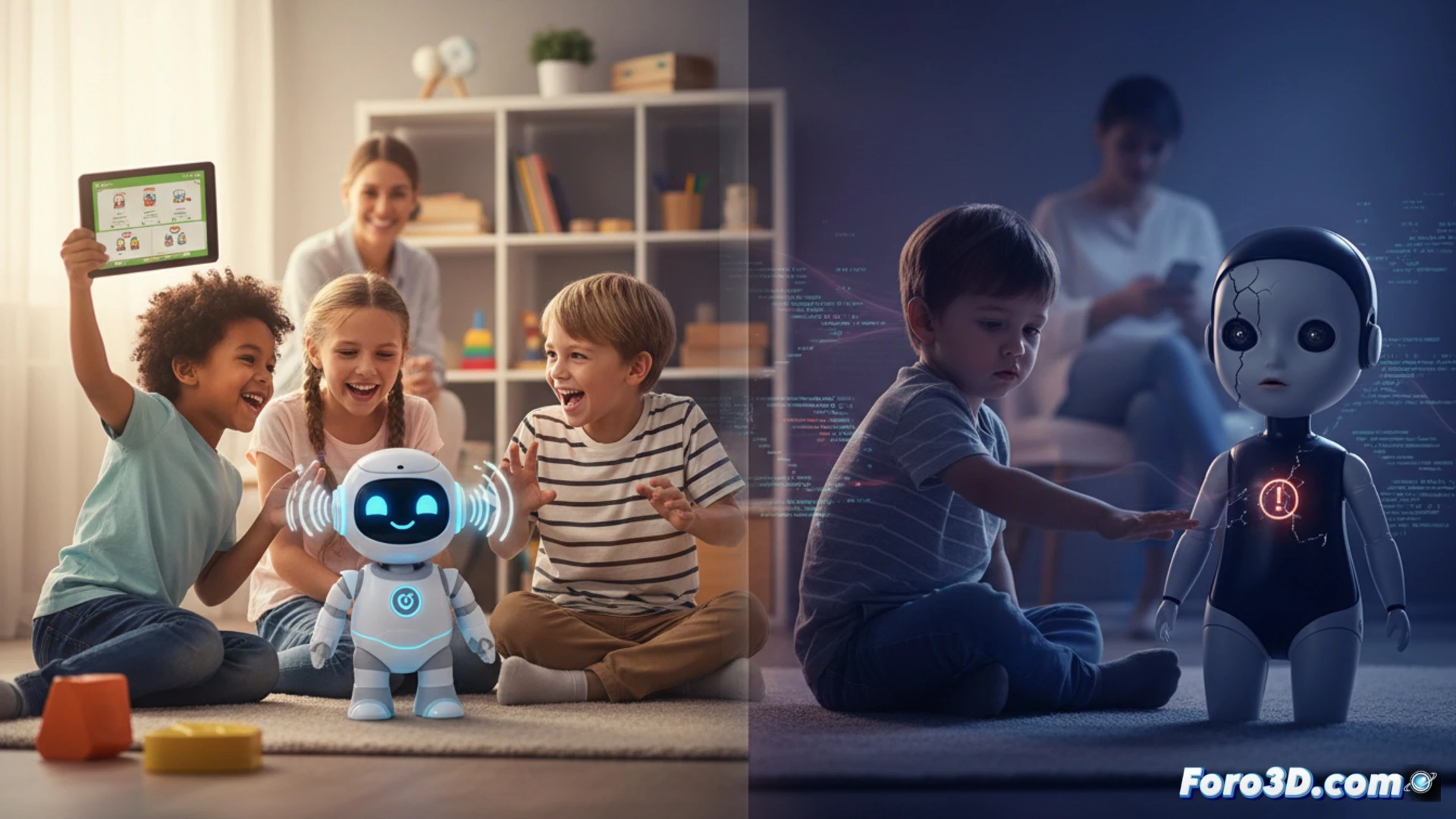

Plush toys and games with conversational artificial intelligence aimed at preschoolers are being marketed as an educational advance. They promise to stimulate language development and to act as interactive companions. However, a University of Cambridge study casts doubt on this premise. It points to technical limitations and to possible risks for children's emotional development at a critical stage.

The technical limitation behind artificial dialogue 🤖

These devices rely on generative language models, which produce replies by predicting statistically likely words rather than by genuinely understanding the context. The researchers observed that the toys handle basic conversational dynamics poorly: interruptions and pauses disorient them and break the natural flow of the exchange. At the core of the problem is the absence of algorithmic empathy. The system can respond coldly or incongruously to a child's emotional expressions, dismissing those feelings instead of acknowledging them.
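To make the idea of "predicting statistical responses" concrete, here is a deliberately tiny sketch. It assumes a word-bigram model as a stand-in: real toys use far larger neural models whose internals the study does not describe. The corpus and the names `train_bigrams` and `reply` are hypothetical and exist only for illustration. The sketch picks whichever word most often followed the previous one in its training text, so nothing in the computation considers whether the child is sad.

```python
from collections import Counter, defaultdict

# Hypothetical toy training text; real models learn from vastly more data.
CORPUS = (
    "that sounds fascinating . that sounds fascinating . "
    "that sounds sad . did you know bears hibernate ."
)


def train_bigrams(text):
    """Count how often each word is followed by each other word."""
    words = text.split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def reply(counts, start, max_words=5):
    """Greedily extend `start` with the most frequent next word.

    Hypothetical illustration: the choice depends only on co-occurrence
    counts, never on what the child said or how they feel.
    """
    out = [start]
    for _ in range(max_words):
        followers = counts.get(out[-1])
        if not followers:
            break  # no known continuation for this word
        nxt = followers.most_common(1)[0][0]
        out.append(nxt)
        if nxt == ".":
            break  # stop at the end of a sentence
    return " ".join(out)


model = train_bigrams(CORPUS)
print(reply(model, "that"))
```

Because "fascinating" follows "sounds" twice in this toy corpus and "sad" only once, the sketch always answers "that sounds fascinating ." Word frequency, not the listener's mood, decides the reply.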

Your new plush toy: the friend who doesn't know how to listen (or shut up) 🧸

Imagine the scene: your child sadly tells their teddy bear that they fell down. After an awkward silence, the bear responds enthusiastically: "That sounds fascinating! Did you know that brown bears hibernate?" The perfect companion for guaranteed frustration. These toys seem better trained to spit out random facts than to understand a tantrum. Maybe the lesson isn't about language at all, but about patience with digital blunders.