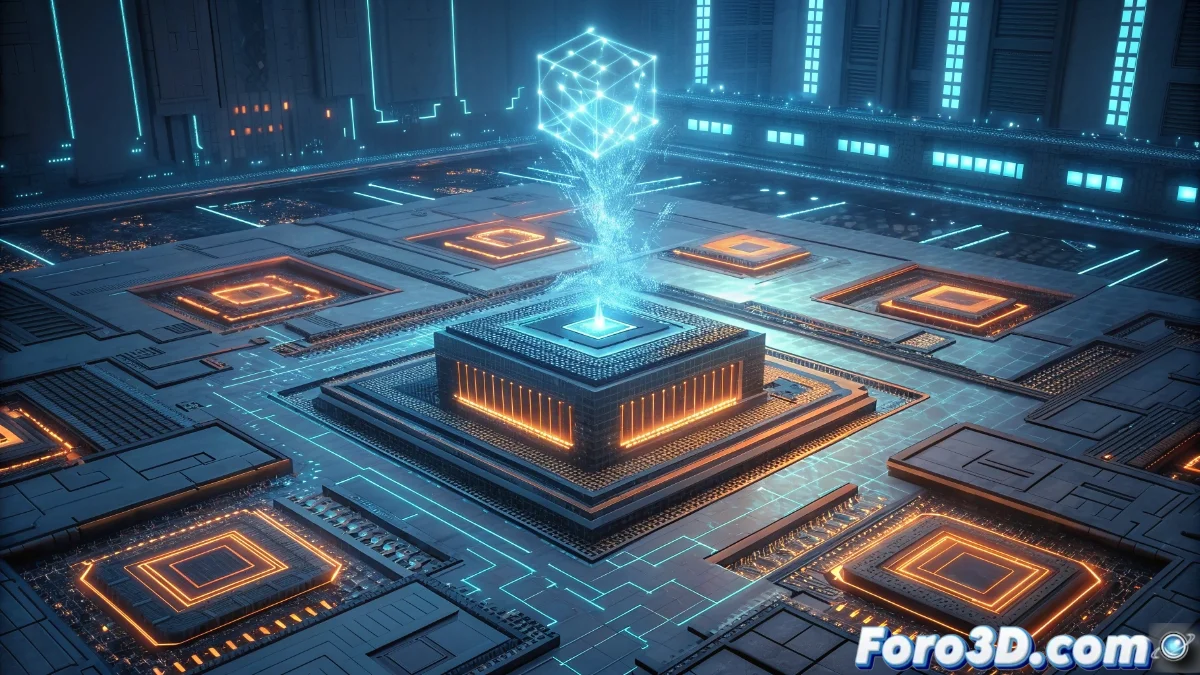

Tensor Processing Units Revolutionize Artificial Intelligence Hardware

Tensor Processing Units (TPUs) mark a major step in the development of specialized hardware for artificial intelligence. Google's fourth-generation TPU is designed to maximize efficiency in the matrix and tensor operations that dominate the computation in deep neural networks 🚀.

Architecture Optimized for Artificial Intelligence

These specialized processors are built to execute matrix multiplications and convolutions, which form the computational core of deep learning training. They combine high-bandwidth memory with matrix computation units that perform thousands of operations in parallel.
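To make the idea of a matrix computation unit more concrete, here is a minimal conceptual sketch in plain Python of a tiled matrix multiplication. It is not how a TPU is programmed (in practice a framework such as JAX or TensorFlow compiles the work for the hardware), and the names `matmul_tiled` and `TILE` are hypothetical, chosen only for illustration. The point is that the output is built from small fixed-size blocks of multiply-accumulate work, which is the kind of unit a matrix engine handles at once.

```python
# Conceptual sketch only: real TPU matrix units are hardware arrays,
# not Python loops. TILE is a hypothetical size chosen for readability.
TILE = 2


def matmul_tiled(a, b, tile=TILE):
    """Multiply a (n x k) by b (k x m), one tile of work at a time."""
    n, k, m = len(a), len(b), len(b[0])
    out = [[0.0] * m for _ in range(n)]
    for i0 in range(0, n, tile):          # tile rows of the output
        for j0 in range(0, m, tile):      # tile columns of the output
            for p0 in range(0, k, tile):  # slices of the shared dimension
                # The multiply-accumulates for one tile are the unit of work
                # that a matrix unit would carry out together in hardware.
                for i in range(i0, min(i0 + tile, n)):
                    for j in range(j0, min(j0 + tile, m)):
                        acc = out[i][j]
                        for p in range(p0, min(p0 + tile, k)):
                            acc += a[i][p] * b[p][j]
                        out[i][j] = acc
    return out


a = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]
b = [[7.0, 8.0], [9.0, 10.0], [11.0, 12.0]]
print(matmul_tiled(a, b))  # [[58.0, 64.0], [139.0, 154.0]]
```

The three outer loops only decide which tile is processed next. On specialized hardware the inner work is done by many multiply-accumulate cells simultaneously, which is where the speedup comes from.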

Key advantages of specialization:

- Markedly higher performance than conventional CPUs and GPUs on machine learning tasks

- Ability to handle complex models that would require months of processing on traditional hardware

- Drastic reduction in training times, from weeks to hours or days

This architectural specialization allows models that previously needed weeks or months of computation to be trained in hours or days, broadening access to advanced AI.

Integration into Cloud Ecosystems

These processing units are offered primarily through Google Cloud Platform, so developers and organizations can use their computational power without upfront investment in physical infrastructure. High-speed interconnects between multiple TPUs enable distributed training of very large models.
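Distributed training across several accelerators is often done in a data-parallel style: each device computes gradients on its own shard of the batch, and the results are averaged over the interconnect. The following is a small simulation of that pattern in plain Python on a toy model `y = w * x`. No real TPU is involved, and `NUM_DEVICES`, `grad_mse`, and `data_parallel_grad` are hypothetical names introduced only for this sketch. It also assumes the batch size divides evenly by the number of devices.

```python
# Conceptual simulation of data-parallel training on a toy model y = w * x.
# NUM_DEVICES and the data are hypothetical; no real accelerator is used.
NUM_DEVICES = 4


def grad_mse(w, xs, ys):
    """Gradient of the mean squared error of y = w * x with respect to w."""
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def data_parallel_grad(w, xs, ys, num_devices=NUM_DEVICES):
    """Split the batch into equal shards, compute local gradients, average."""
    shard = len(xs) // num_devices  # assumes len(xs) % num_devices == 0
    local = [
        grad_mse(w, xs[d * shard:(d + 1) * shard], ys[d * shard:(d + 1) * shard])
        for d in range(num_devices)
    ]
    # On real hardware this averaging step is an all-reduce over the
    # high-speed links between devices.
    return sum(local) / num_devices


xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.0 * x for x in xs]  # data generated with a true weight of 2.0
w = 0.0
for _ in range(50):
    w -= 0.01 * data_parallel_grad(w, xs, ys)
print(round(w, 3))
```

With equal-sized shards, the averaged gradient matches the full-batch gradient, so the loop converges toward the true weight of 2.0 just as single-device training would. The cost that distributed hardware has to keep small is the averaging step, which is why interconnect speed matters.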

Main applications enabled:

- Advanced research in natural language processing and contextual understanding

- Computer vision systems for real-time image and video analysis

- Recommendation platforms that process petabytes of user data

Current Technological Paradox

The irony is that while these processing units run extremely sophisticated artificial intelligence algorithms, they still cannot settle seemingly simple dilemmas, such as choosing the best entertainment content, without extensive human intervention 🎯.