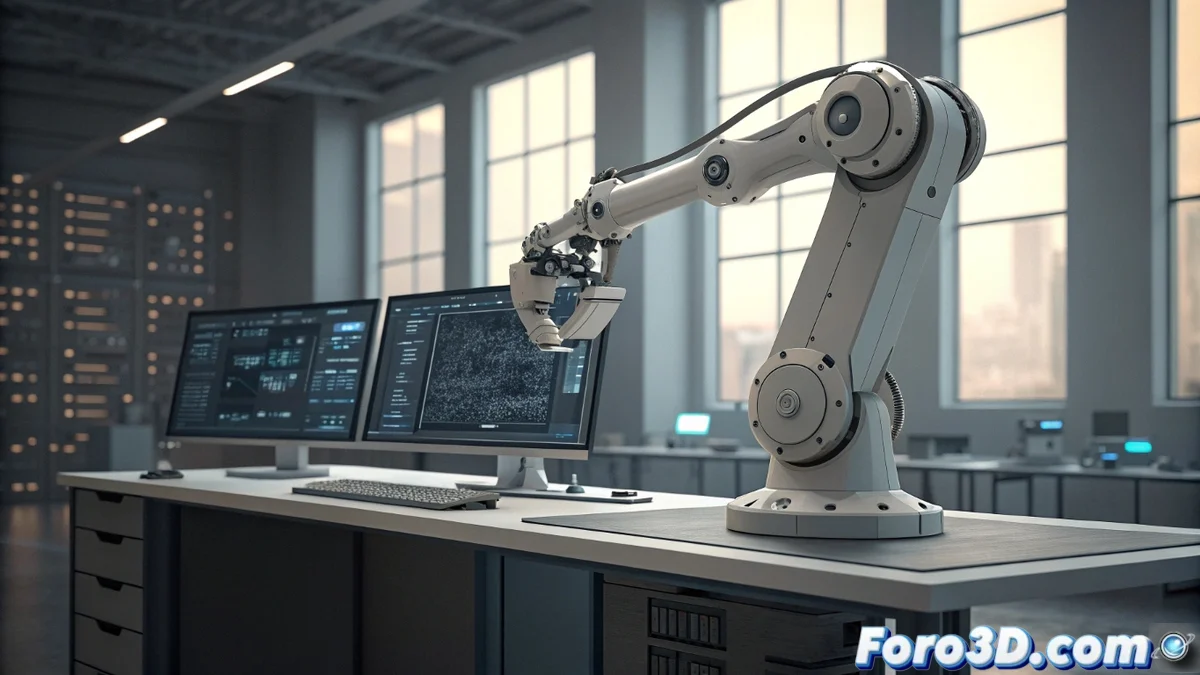

A new framework uses language models to generate and verify robotic code

Robotics takes a step forward with a framework that integrates large language models. The system acts as a static simulator, allowing developers to predict how a robot will move without running tests in the real world or relying on heavy 3D simulators. 🦾

Benefits for the development community

For forums like foro3d.com, this technique is highly relevant. It opens discussions on automating robots with AI and on optimizing how the software that controls them is written. Users can share methods for simulating drones or ground vehicles in virtual environments, exchanging technical knowledge and exploring practical projects without expensive hardware.

Key advantages of the approach:

- Save time and resources: Eliminates the need to set up complex physical or virtual testing environments.

- Generate reliable code: Automatically produces corrective instructions for the robot.

- Iterate quickly: Allows testing and refining control algorithms in an abstract space before any implementation.

This method works as an abstract reasoning engine, constantly evaluating conditions and generating narratives of what would happen.

How the system processes instructions

The language model takes high-level commands and translates them into a sequential action plan. It evaluates the environment and the robot's predicted internal states, producing precise semantic descriptions of the robot's trajectory. This ability to reason about physics and logical consequences is the core of the system.
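The article does not show the framework's internals, so the following Python sketch only illustrates the general idea. A high-level command becomes a sequence of symbolic actions, and each action is applied to a predicted state to produce a textual description of the trajectory. The language model is replaced here by a hard-coded lookup (`plan_from_command`). `RobotState`, `apply_action`, `describe` and `simulate` are hypothetical names chosen for this example.

```python
from dataclasses import dataclass, replace


# Hypothetical symbolic robot state; the real framework's state format is not described.
@dataclass(frozen=True)
class RobotState:
    x: int
    y: int
    altitude: int
    airborne: bool


# Hypothetical stand-in for the language model: maps one known command
# to a fixed sequence of (action, amount) pairs.
def plan_from_command(command: str) -> list:
    if command == "inspect the north wall":
        return [("takeoff", 10), ("move_north", 5), ("hover", 0), ("land", 0)]
    raise ValueError(f"unknown command: {command!r}")


# Predict the next state for one action using simple rules, no physics engine.
def apply_action(state: RobotState, action: str, amount: int) -> RobotState:
    if action == "takeoff":
        return replace(state, altitude=amount, airborne=True)
    if action == "move_north":
        return replace(state, y=state.y + amount)
    if action == "hover":
        return state
    if action == "land":
        return replace(state, altitude=0, airborne=False)
    raise ValueError(f"unknown action: {action}")


# Turn a predicted state into a short semantic description.
def describe(state: RobotState) -> str:
    where = f"({state.x}, {state.y})"
    if state.airborne:
        return f"flying at {state.altitude} m above {where}"
    return f"on the ground at {where}"


def simulate(command: str, start: RobotState) -> list:
    """Return one description per step of the predicted trajectory."""
    state = start
    narrative = []
    for action, amount in plan_from_command(command):
        state = apply_action(state, action, amount)
        narrative.append(f"after {action}: {describe(state)}")
    return narrative


for line in simulate("inspect the north wall", RobotState(0, 0, 0, False)):
    print(line)
```

Running it prints one line per predicted step, ending with the drone on the ground at (0, 5). In the framework described here, the plan and the state predictions would come from the model's reasoning rather than from fixed rules.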

Functions of the reasoning process:

- Interpret actions: Understands the commands given to the robot.

- Predict state changes: Reasons about how each action alters the environment and the robot's state.

- Detect logical errors: Identifies problems in the plan before executing real code, such as preventing a drone from landing where it shouldn't.
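A plan-level check like the one in the last item can be sketched as a walk over the planned actions. The walk tracks a predicted state and flags violations before anything runs. This is a rule-based stand-in, not the framework's method. `check_plan`, the action names and the `NO_LANDING_ZONES` grid cells are all hypothetical.

```python
from typing import List, Optional, Tuple

Cell = Tuple[int, int]
Step = Tuple[str, Optional[Cell]]

# Hypothetical map data: grid cells where the drone must not land.
NO_LANDING_ZONES = {(3, 4), (5, 5)}


def check_plan(plan: List[Step], start: Cell = (0, 0)) -> List[str]:
    """Symbolically walk the plan and report logical errors without executing it."""
    position, airborne = start, False
    errors = []
    for i, (action, target) in enumerate(plan):
        if action == "takeoff":
            if airborne:
                errors.append(f"step {i}: takeoff while already airborne")
            airborne = True
        elif action == "fly_to":
            if not airborne:
                errors.append(f"step {i}: fly_to {target} while on the ground")
            position = target
        elif action == "land":
            if not airborne:
                errors.append(f"step {i}: land while already on the ground")
            if position in NO_LANDING_ZONES:
                errors.append(f"step {i}: landing at {position} is forbidden")
            airborne = False
        else:
            errors.append(f"step {i}: unknown action {action!r}")
    return errors


plan = [("takeoff", None), ("fly_to", (3, 4)), ("land", None)]
for problem in check_plan(plan):
    print(problem)
```

Here the only reported problem is the landing at (3, 4), which is caught before any control code would run. A real system would derive the constraints from its environment description rather than from a hard-coded set.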

Impact on the future of robotic development

This approach transforms how software for robots is developed. By offering a static simulation environment, it drastically reduces the trial-and-error cycle. The community can now focus on designing complex behaviors and debugging logic in a safe and efficient framework, paving the way for more intelligent and reliable robots. 🤖