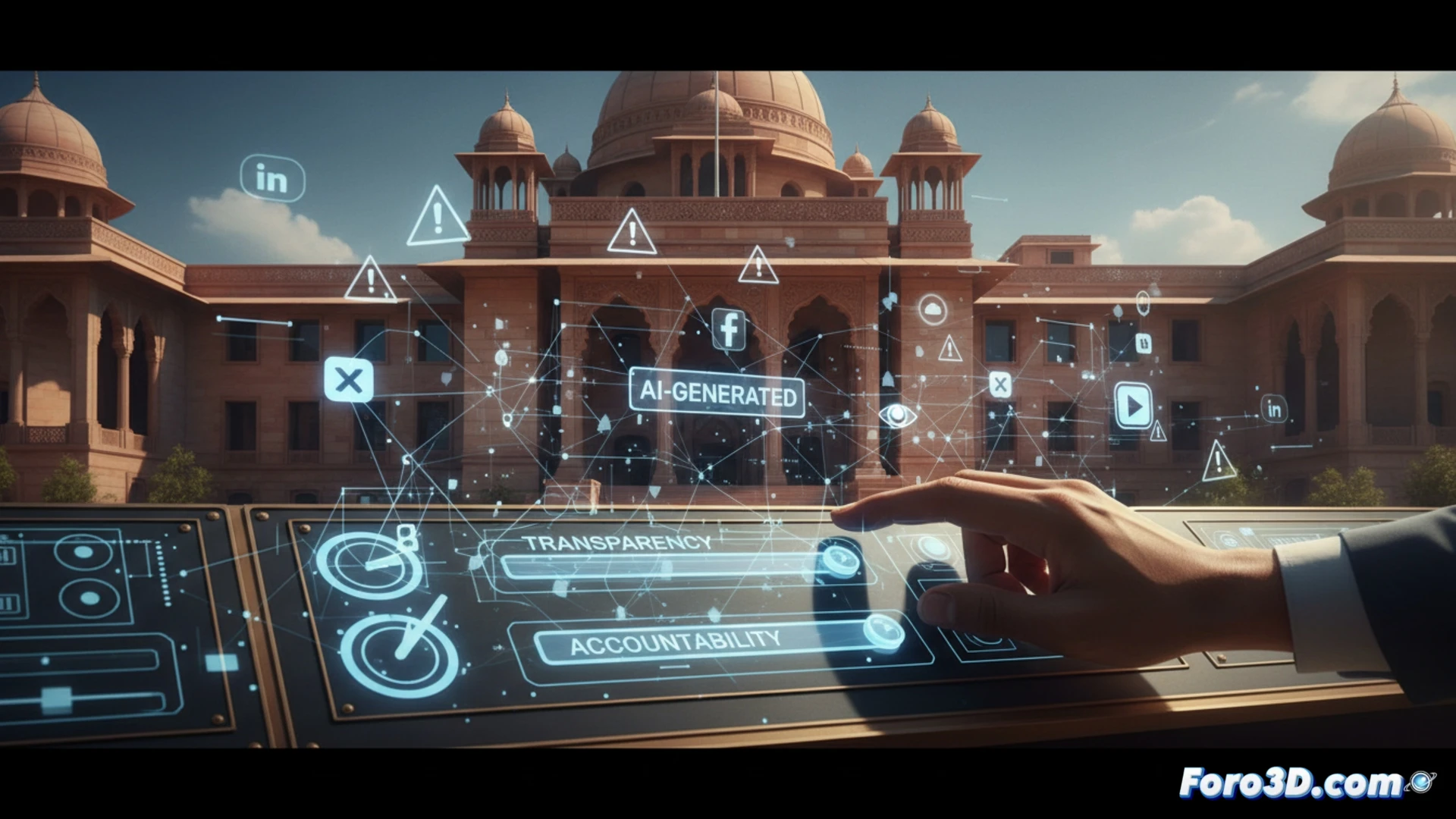

India Strengthens Rules for Artificial Intelligence on Social Media

Indian authorities have modified their internet regulatory framework, imposing new requirements on major social media platforms. The main objective is to make these platforms manage material generated by artificial intelligence systems more rigorously, amid growing alarm over the spread of false information. 🛡️

Greater Obligations for Digital Platforms

Companies designated as significant intermediaries must now act with greater speed and transparency. One of the key rules requires them to clearly label all synthetic or manipulated content. They must also inform users of their usage policies and explain how those policies are enforced.

Main Required Actions:

- Swiftly remove any illegal material upon official notification.

- Inform users about the limitations and possible errors of AI systems.

- Not evade responsibility by claiming that content was generated automatically.

The legal framework is evolving to try to keep pace with advances in artificial intelligence.

Adapting the Law to the AI Era

These changes are implemented through an amendment to the country's Information Technology Act. The government seeks to balance fostering technological innovation against protecting citizens from potentially harmful content. The amendment makes clear that the automated origin of content does not exempt the hosting platform from responsibility.

Consequences of the New Approach:

- Companies must invest more resources in reviewing and moderating content.

- It may set a precedent for other countries seeking to regulate AI in digital environments.

- It increases pressure on legal and compliance departments.

A New Challenge for Content Moderation

The ability of machines to generate convincing text, images, and video poses unprecedented legal challenges. India's new rules are an effort to push platforms into a more active and preventive role. The message is clear: innovation cannot come at the expense of public safety and accurate information. ⚖️