On the forum there is a lot of talk about core counts and clock frequencies, but one decisive element often goes unnoticed: the processor's cache memory. This small, ultra-fast memory is built into the chip and organized in levels. It keeps the cores supplied with data so they don't constantly have to wait on RAM. Its design has a direct impact on smoothness, especially in games and demanding software that access large amounts of data continuously.

Cache Hierarchy: From L1 to L3, Minimizing Cache Misses 🧠

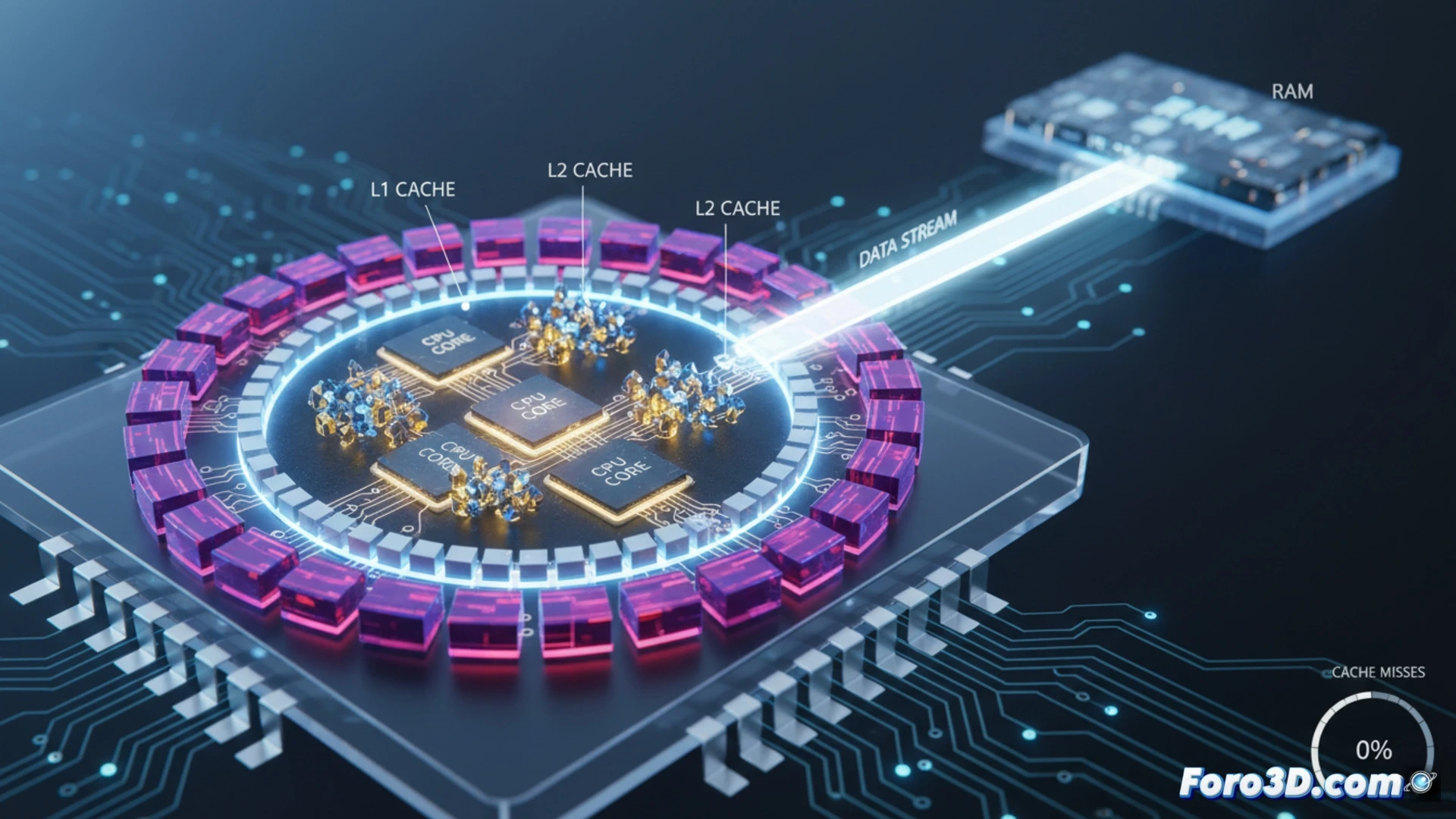

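Efficiency lies in the hierarchy. The L1 cache, the smallest and fastest, is private to each core. The L2 is larger but somewhat slower. In most current desktop CPUs it is also private to each core, although some designs share it among a cluster of cores. The L3, or last-level cache, is the largest. It is shared by all the cores on the chip or, in some multi-chiplet designs, by each group of cores. When a core needs data, it checks L1 first, then L2, then L3. A miss at one level passes the request on to the next. Only when the data is missing from every level does the core have to fetch it from RAM, which costs hundreds of cycles. A large, well-managed cache reduces how often that happens.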
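To make the search order concrete, here is a minimal Python sketch of a three-level lookup. It makes several simplifying assumptions. Each level is fully associative with LRU eviction. Lines are 64 bytes. The latencies are illustrative round figures (4, 14, 50 and 300 cycles), not measurements. Real caches are set-associative and use more elaborate replacement and inclusion policies. `CacheLevel`, `Hierarchy` and `LATENCY` are hypothetical names for this example only.

```python
from collections import OrderedDict

# Assumed load-to-use latencies in CPU cycles: illustrative round numbers,
# not measurements of any specific processor.
LATENCY = {"L1": 4, "L2": 14, "L3": 50, "RAM": 300}


class CacheLevel:
    """One cache level, modeled as fully associative with LRU eviction."""

    def __init__(self, name, capacity_lines):
        self.name = name
        self.capacity = capacity_lines
        self.lines = OrderedDict()  # line number -> None, oldest first

    def lookup(self, line):
        """Return True on a hit and mark the line as most recently used."""
        if line in self.lines:
            self.lines.move_to_end(line)
            return True
        return False

    def fill(self, line):
        """Insert a line, evicting the least recently used one if full."""
        self.lines[line] = None
        self.lines.move_to_end(line)
        if len(self.lines) > self.capacity:
            self.lines.popitem(last=False)


class Hierarchy:
    """Searches L1, then L2, then L3, and falls back to RAM on a full miss."""

    def __init__(self, l1_lines, l2_lines, l3_lines, line_size=64):
        self.levels = [
            CacheLevel("L1", l1_lines),
            CacheLevel("L2", l2_lines),
            CacheLevel("L3", l3_lines),
        ]
        self.line_size = line_size
        self.cycles = 0
        self.served = {"L1": 0, "L2": 0, "L3": 0, "RAM": 0}

    def access(self, address):
        """Return the level that served the access and charge its latency."""
        line = address // self.line_size
        served_by = "RAM"
        for level in self.levels:
            if level.lookup(line):
                served_by = level.name
                break
        # Copy the line into every faster level that missed, so the next
        # access to it can be served closer to the core.
        for level in self.levels:
            if level.name == served_by:
                break
            level.fill(line)
        self.cycles += LATENCY[served_by]
        self.served[served_by] += 1
        return served_by


h = Hierarchy(l1_lines=2, l2_lines=4, l3_lines=8)
trace = [h.access(addr) for addr in (0, 8, 64, 128, 0)]
print(trace)     # ['RAM', 'L1', 'RAM', 'RAM', 'L2']
print(h.cycles)  # 918
```

Address 8 falls in the same 64-byte line as address 0, so it hits in L1. By the time address 0 is requested again, the tiny two-line L1 has evicted it, but L2 still holds it. The repeat access therefore costs 14 cycles instead of 300.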

When Your CPU Has to Go Sightseeing in RAM 🐌

It's the dramatic moment: your processor, used to the speed of its cache, doesn't find what it's looking for. So it sets off on a slow, heavy journey to the distant land of RAM. Measured in clock cycles, that trip is like an expedition to the center of the Earth. Meanwhile the cores are left waiting, the frame rate stutters, and you blame the graphics card. The cache is like a well-stocked pantry that saves the kitchen (the CPU) from running to the market (the RAM) for every ingredient.
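To put a number on the detour, here is a back-of-the-envelope average access time. It weights each level's load-to-use latency $t_\ell$ by the fraction of accesses $f_\ell$ that level serves. The latencies (4, 14, 50 and 300 cycles) and the fractions are assumed round figures for illustration, not measurements of any particular CPU.

$$
\bar{t} = \sum_{\ell \in \{\mathrm{L1},\,\mathrm{L2},\,\mathrm{L3},\,\mathrm{RAM}\}} f_\ell \, t_\ell
$$

$$
\bar{t}_{\mathrm{RAM}=0.5\%} = 0.95(4) + 0.03(14) + 0.015(50) + 0.005(300) = 3.8 + 0.42 + 0.75 + 1.5 = 6.47 \text{ cycles}
$$

$$
\bar{t}_{\mathrm{RAM}=2\%} = 0.935(4) + 0.03(14) + 0.015(50) + 0.02(300) = 3.74 + 0.42 + 0.75 + 6 = 10.91 \text{ cycles}
$$

In this example, sending just 1.5% more of the accesses to RAM makes the average access about 1.7 times slower, even though the caches still serve 98% of them.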