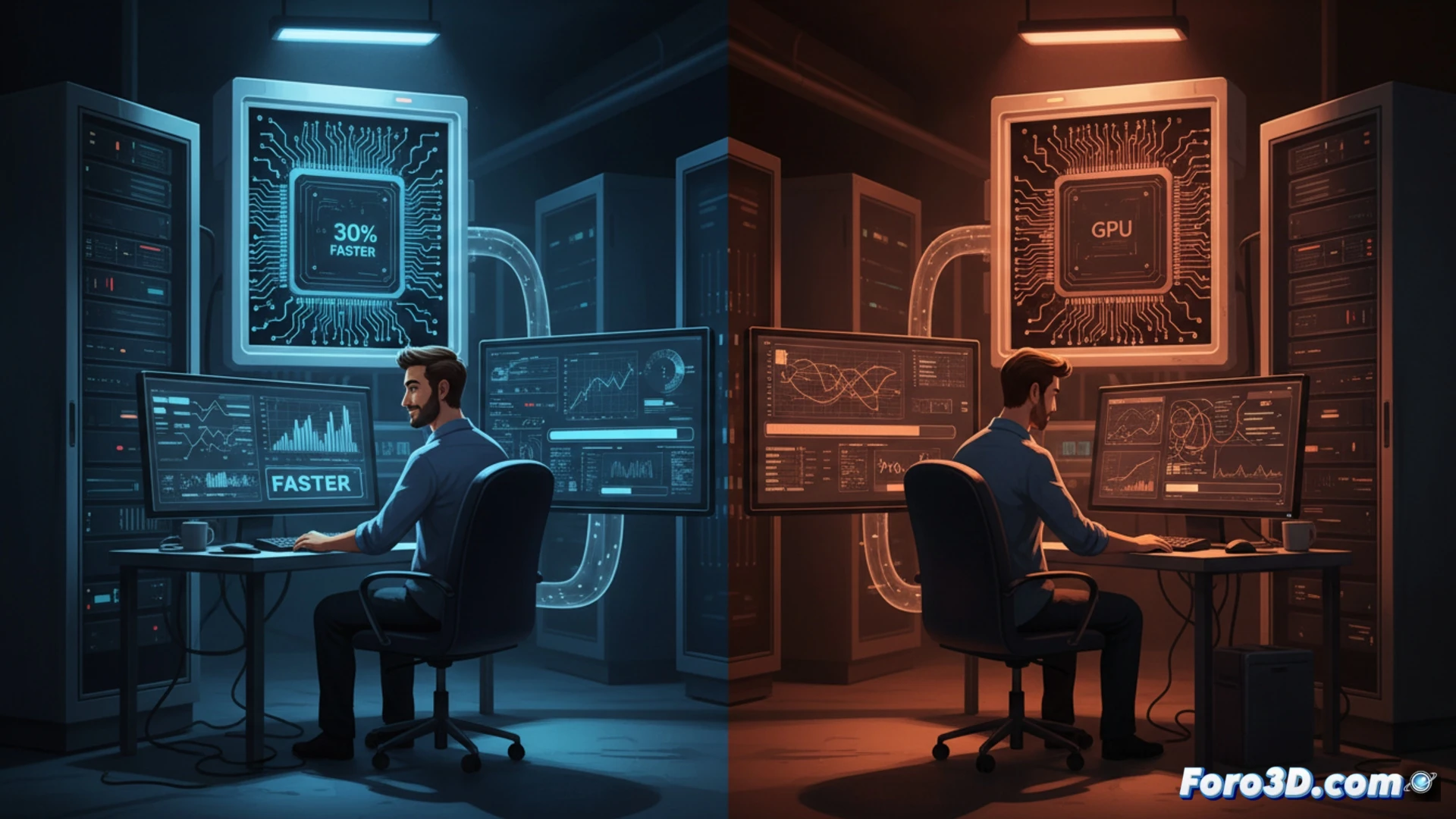

You rent an instance with a specific GPU, but the performance you get is a lottery. Manufacturers sort their chips into bins based on silicon quality, and cloud providers hand those GPUs out unevenly. As a result, the same GPU model can deliver up to 30% less performance on AI workloads, which stretches training times and raises the final cost of the project.

Chip classification and its impact on AI development 🎲

NVIDIA assigns each GPU to a bin based on its energy efficiency and overclocking headroom. Higher-quality units go to premium customers or high-performance applications, while lower-quality ones end up in budget instances. So two developers who rent the same instance type can have very different experiences: one finishes training in 10 hours, and another, with worse luck, needs 13. The variability is real and hard to predict without advanced monitoring tools.
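To put numbers on that gap, here is a minimal sketch of the arithmetic, assuming training time scales inversely with GPU throughput. The function names and the hourly price are hypothetical and chosen only for illustration; the 10-hour and 13-hour figures are the ones from the example above.

```python
def training_hours(baseline_hours: float, throughput_loss: float) -> float:
    """Hours needed when throughput drops by `throughput_loss` (0.30 means 30% lower)."""
    if not 0 <= throughput_loss < 1:
        raise ValueError("throughput_loss must be in [0, 1)")
    # Same amount of work at lower throughput takes proportionally longer.
    return baseline_hours / (1 - throughput_loss)


def implied_throughput_loss(baseline_hours: float, observed_hours: float) -> float:
    """Fraction of throughput lost, inferred from two wall-clock times for the same job."""
    return 1 - baseline_hours / observed_hours


HOURLY_RATE_USD = 2.00  # hypothetical price, not taken from any real provider

baseline = 10.0  # hours on the "lucky" GPU
slow = 13.0      # hours on the "unlucky" GPU

loss = implied_throughput_loss(baseline, slow)
worst_case = training_hours(baseline, 0.30)
extra_cost = (slow - baseline) * HOURLY_RATE_USD

print(f"13 h vs 10 h implies {loss:.1%} lower throughput")
print(f"30% lower throughput turns 10 h into {worst_case:.1f} h")
print(f"Extra cost of the slow run: ${extra_cost:.2f}")
```

Under these assumptions, the 13-hour run corresponds to roughly 23% lower throughput, while a full 30% drop would push the same job to about 14.3 hours. At the made-up rate of $2 per hour, the unlucky developer pays $6 more for the same result.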

Silicon Russian roulette: did you get the good GPU? ⚡

Renting a GPU in the cloud is like buying a lottery ticket, but without the jackpot. You can pay the same as your colleague and end up with a GPU that performs like a '90s calculator. The worst part is that you can't complain: the contract says the service is equivalent. So while some train models in record time, others stare at the progress bar and wonder whether it would be faster to do the math by hand.