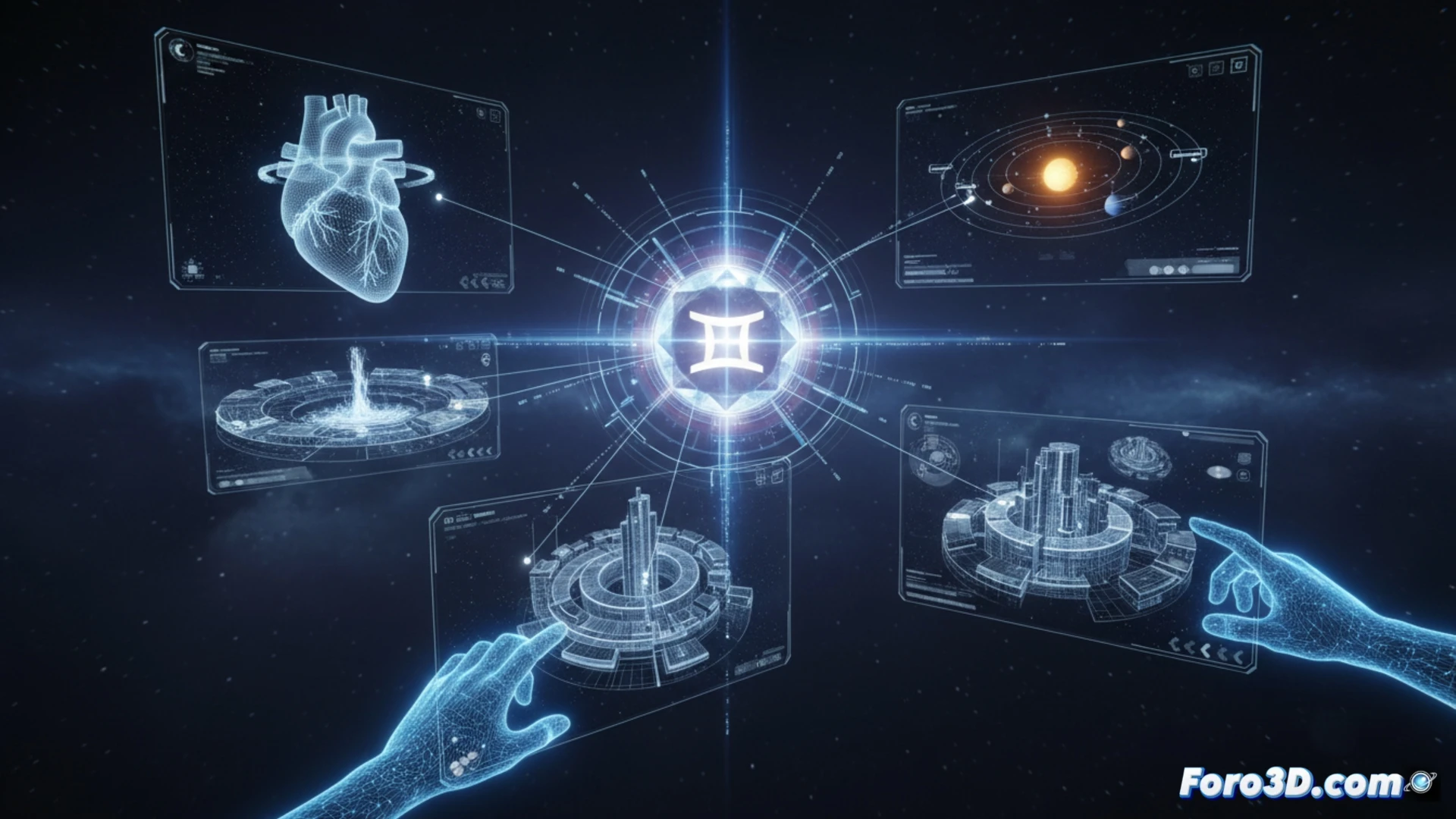

Google has updated Gemini with a notable capability: generating simulations and interactive 3D models directly in the chat. Users can manipulate three-dimensional representations of objects or concepts without leaving the conversation. The goal is to enrich the interactive experience and make complex information easier to understand through visual, dynamic exploration.

Technical foundations of real-time rendering in a chat 🤔

This capability probably relies on generating code for standard web technologies, such as WebGL or libraries built on top of it. Gemini could produce a snippet that, when executed, initializes a 3D scene with rotation, zoom, and pan controls. The hard part is translating a textual description into coherent geometry, materials, and lighting, all within the security limits of a browser.
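To give a rough idea of the interaction logic behind such controls, here is a minimal TypeScript sketch of an orbit-style camera. It is not Gemini's actual output and uses no rendering library. The names `OrbitState`, `createOrbit`, `rotate`, `zoom`, `pan`, and `cameraPosition`, along with the default distance and the zoom limits, are assumptions made up for this example.

```typescript
// Hypothetical sketch: orbit-camera math only, with no rendering library involved.
type Vec3 = { x: number; y: number; z: number };

interface OrbitState {
  azimuth: number;  // horizontal angle around the target, in radians
  polar: number;    // angle measured from the +Y axis, in radians
  distance: number; // distance from the camera to the target
  target: Vec3;     // point the camera looks at
}

const clamp = (v: number, lo: number, hi: number): number =>
  Math.min(hi, Math.max(lo, v));

// Assumed defaults: camera 5 units away, level with the target, on the +Z side.
function createOrbit(): OrbitState {
  return { azimuth: 0, polar: Math.PI / 2, distance: 5, target: { x: 0, y: 0, z: 0 } };
}

// Rotation: change the two angles, keeping the polar angle away from the poles.
function rotate(s: OrbitState, dAzimuth: number, dPolar: number): OrbitState {
  const eps = 1e-3;
  return {
    ...s,
    azimuth: s.azimuth + dAzimuth,
    polar: clamp(s.polar + dPolar, eps, Math.PI - eps),
  };
}

// Zoom: scale the distance (factor < 1 moves closer), within assumed limits.
function zoom(s: OrbitState, factor: number): OrbitState {
  return { ...s, distance: clamp(s.distance * factor, 0.5, 100) };
}

// Pan (simplified): move the target along the camera's horizontal right
// vector and along the world Y axis.
function pan(s: OrbitState, dx: number, dy: number): OrbitState {
  const right: Vec3 = { x: Math.cos(s.azimuth), y: 0, z: -Math.sin(s.azimuth) };
  return {
    ...s,
    target: {
      x: s.target.x + right.x * dx,
      y: s.target.y + dy,
      z: s.target.z + right.z * dx,
    },
  };
}

// Convert the spherical orbit state into a Cartesian camera position.
function cameraPosition(s: OrbitState): Vec3 {
  const sinPolar = Math.sin(s.polar);
  return {
    x: s.target.x + s.distance * sinPolar * Math.sin(s.azimuth),
    y: s.target.y + s.distance * Math.cos(s.polar),
    z: s.target.z + s.distance * sinPolar * Math.cos(s.azimuth),
  };
}

// Usage: a quarter turn around the object, then zoom in to half the distance.
const view = zoom(rotate(createOrbit(), Math.PI / 2, 0), 0.5);
console.log(cameraPosition(view));
```

In a real scene, pointer and wheel events would call these functions, and the result of `cameraPosition` would be passed to whatever renderer draws the frame. Keeping the state as plain data makes the limits (such as the zoom range) easy to check independently of the graphics code.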

Goodbye to hours of modeling, hello to minutes of corrections 😅

The 3D community might view this with some irony. After years spent perfecting topology and UV mapping techniques, an assistant now generates a model from a couple of sentences. Of course, you then have to explain to it that tables don't usually have five legs and that the requested character needs an internal skeleton to be animated. It's a great shortcut, but the devil is, as always, in the details of practical implementation.