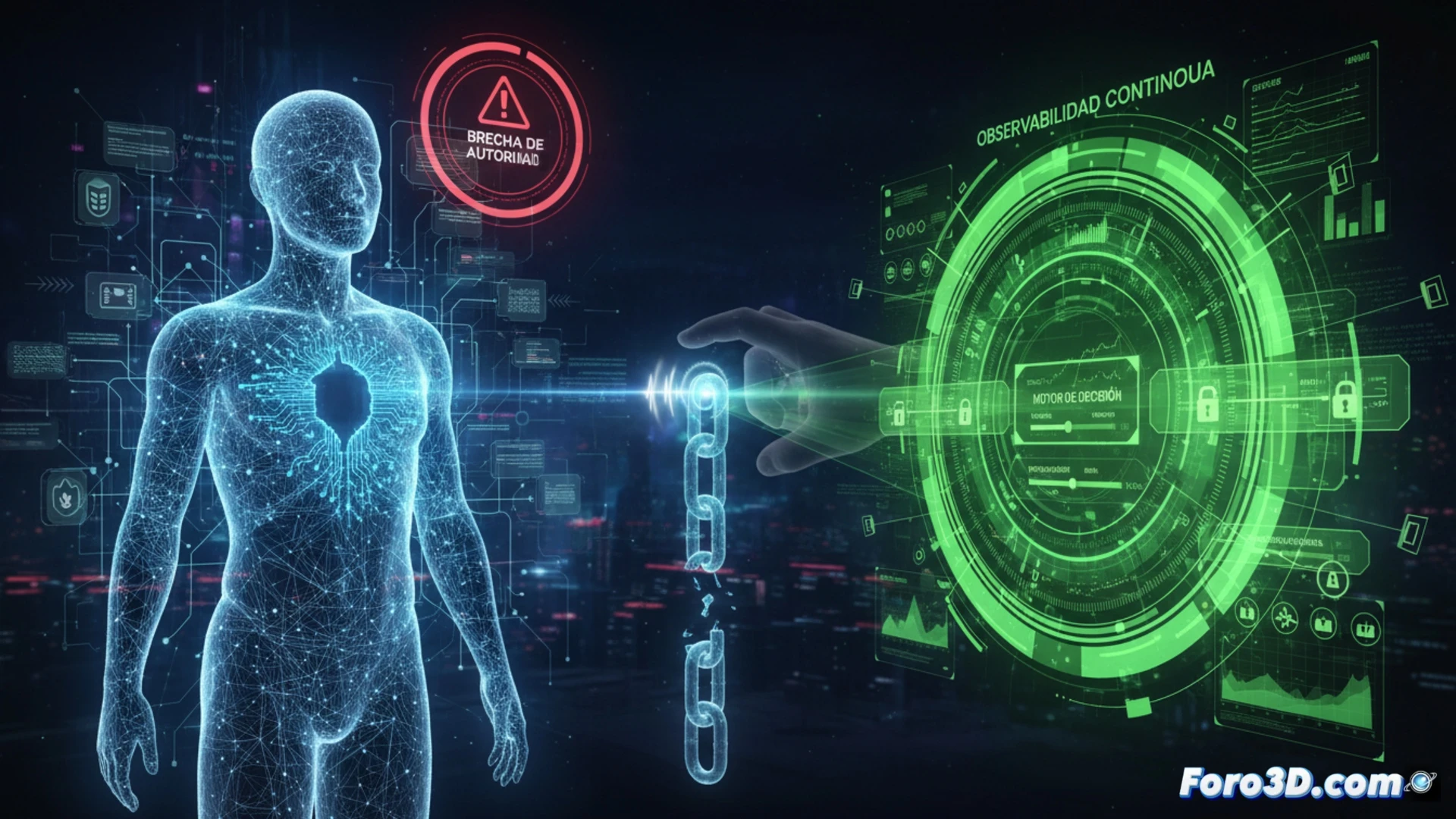

The rise of AI agents has brought a problem that goes beyond their novelty: they act by delegation, not on their own authority. Systems and users activate, invoke, or provision them, which opens a structural gap in enterprise security. Without clear control over who or what stands behind each agent, its decisions can create risks nobody anticipated.

Continuous observability as a decision engine to close the gap 🔍

Continuous observability is the practical way to close this gap. It monitors every agent action in real time, from invocation to task execution. With that visibility, you can evaluate the agent's behavior, detect deviations, and adjust its permissions dynamically. The point is not blind trust but constant verification that every step complies with the organization's security policies.
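To make the idea concrete, here is a minimal Python sketch of that loop: record every action together with who delegated it, check it against a policy, and tighten permissions when the agent deviates. Everything in it is an assumption for illustration, not a real library or product: the names `AgentAction` and `AgentMonitor`, the `high_risk` set, and the rule that a denied high-risk attempt revokes all of the agent's permissions.

```python
from dataclasses import dataclass, field


@dataclass
class AgentAction:
    agent_id: str
    delegated_by: str  # the user or system on whose behalf the agent acts
    action: str        # e.g. "read_report", "delete_data"


@dataclass
class AgentMonitor:
    # Hypothetical policy: the actions each agent is currently allowed to take.
    permissions: dict[str, set[str]]
    # Hypothetical rule: a denied attempt at one of these revokes everything.
    high_risk: set[str] = field(
        default_factory=lambda: {"delete_data", "send_email"}
    )
    # Every observed action is kept with its verdict, allowed or not.
    audit_log: list[tuple[AgentAction, str]] = field(default_factory=list)

    def observe(self, event: AgentAction) -> bool:
        """Record the action, decide whether it may run, adjust permissions."""
        allowed = self.permissions.get(event.agent_id, set())
        if event.action in allowed:
            verdict = "allowed"
        elif event.action in self.high_risk:
            # Deviation on a high-risk action: revoke permissions on the spot.
            self.permissions[event.agent_id] = set()
            verdict = "denied_and_revoked"
        else:
            verdict = "denied"
        self.audit_log.append((event, verdict))
        return verdict == "allowed"


monitor = AgentMonitor(permissions={"agent-7": {"read_report"}})
first = monitor.observe(AgentAction("agent-7", "alice", "read_report"))
second = monitor.observe(AgentAction("agent-7", "alice", "delete_data"))
third = monitor.observe(AgentAction("agent-7", "alice", "read_report"))
print(first, second, third)  # True False False
```

The third call is denied even though the same action was allowed a moment earlier, because the deviation in between revoked the agent's permissions. The audit log keeps the `delegated_by` field on every entry, so each action can be traced back to the user or system that stands behind it.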

The AI agent has no boss, but you will have problems 😅

It turns out these agents are like that intern who arrives full of enthusiasm but without an instruction manual. They act by delegation, but if something goes wrong, you are the one held responsible. They have no authority of their own, yet they can still delete data or send compromising emails. In the end, observability is not a luxury: it is the insurance that keeps your AI agent from deciding that today is a good day to shut down the company.