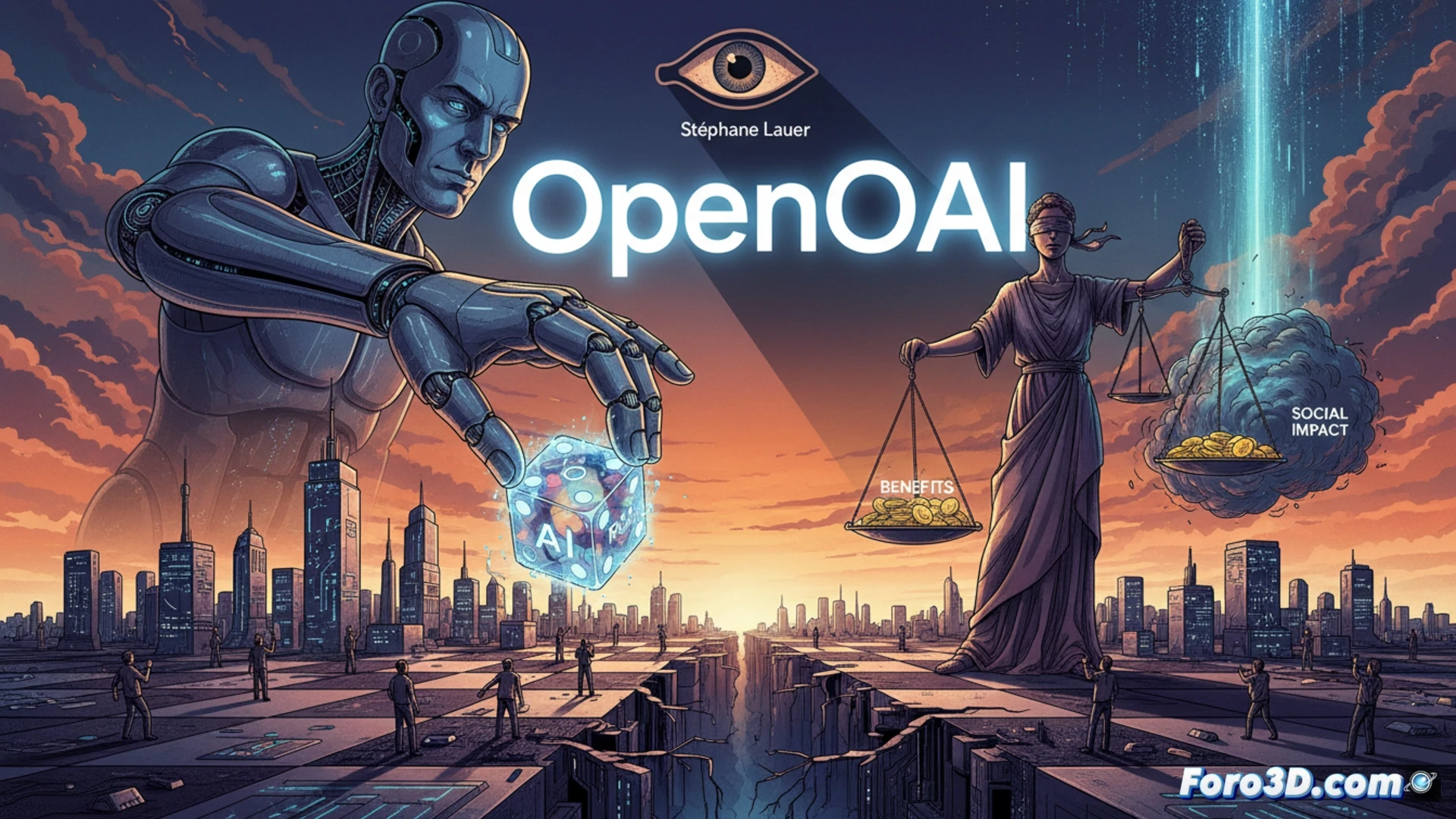

The advancement of artificial intelligence has sparked a crucial debate about its governance. In a recent column, Stéphane Lauer questions whether private companies like OpenAI are able to manage the social risks of this technology and distribute its benefits. The warning is clear: it is dangerous for those who develop the tools to also set the rules of the game, acting as judge and jury in a domain with global impact.

The Architecture of Opacity and Centralized Control 🤖

The current development model relies on black-box systems and massive computational resources concentrated in a few entities. This concentration of technical capability and data creates a power asymmetry. The paradox is that the same teams that design complex algorithms and define the boundaries of their assistants later propose the ethical and safety frameworks. This lack of separation raises doubts about transparency and about the real incentives behind each technical or policy decision.

Trust Me, I'm a Wolf Building the Sheep Pen 🐺

The situation has the air of a modern fable. Companies competing fiercely for market dominance suddenly don the robes of philosophers concerned with the common good. It's as if car manufacturers, after years of selling speed, volunteered to draft the traffic code, ensuring their most powerful models would get a special lane. A public relations strategy so brilliant that even their own AIs would sign off on it.